Why AI is not replacing engineers, it's optimizing dev team structures

The Governance Singularity:

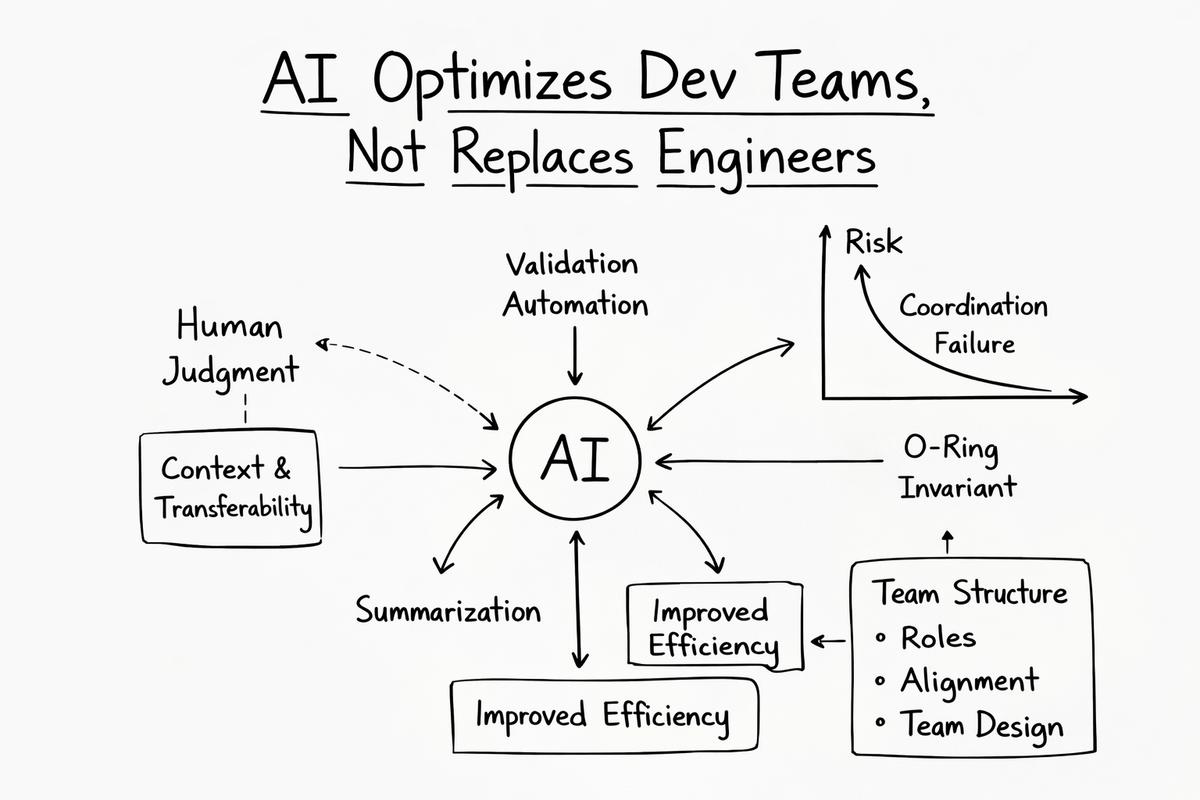

The contemporary discourse on Artificial Intelligence and labor markets remains trapped in a philosophical dead end. Executives ask whether machines will replace software engineers. They treat the labor market as a collection of disconnected seats waiting to be swapped out like spark plugs. This view is mathematically wrong. Actual engineering teams do not function as bags of isolated tasks. They function as a Sequential Probability Network. The reality is this: AI is not replacing engineers; it is optimizing dev team structures. We are exiting the era of intuition. We are entering the era of probability. This doctrine defines the physics of building predictable, high-performance team topologies in the Artificial Intelligence Era.

The Factory Fallacy and The O-Ring Invariant

The fundamental error in modern engineering management is the application of deterministic manufacturing models to stochastic knowledge work. We call this the Factory Fallacy. In a manufacturing environment, the variance of a task approaches zero. Stamping a physical widget takes a fixed, known amount of time. If one station fails, the line stops. The failure is immediately visible. Software engineering is not an assembly line. It is a Stochastic Queueing Network governed by the invisible hand of variance. When managers apply factory principles to software work, they inadvertently build fragile systems destined for delay and cost overruns. This flawed mental model is a primary driver of distributed engineering failure. It amplifies structural drags and creates opaque workflows. We see this clearly in legacy outsourcing models. As stated in Nearshore Platformed, "The initial 'savings' on hourly rates get eaten alive by hidden operational costs."

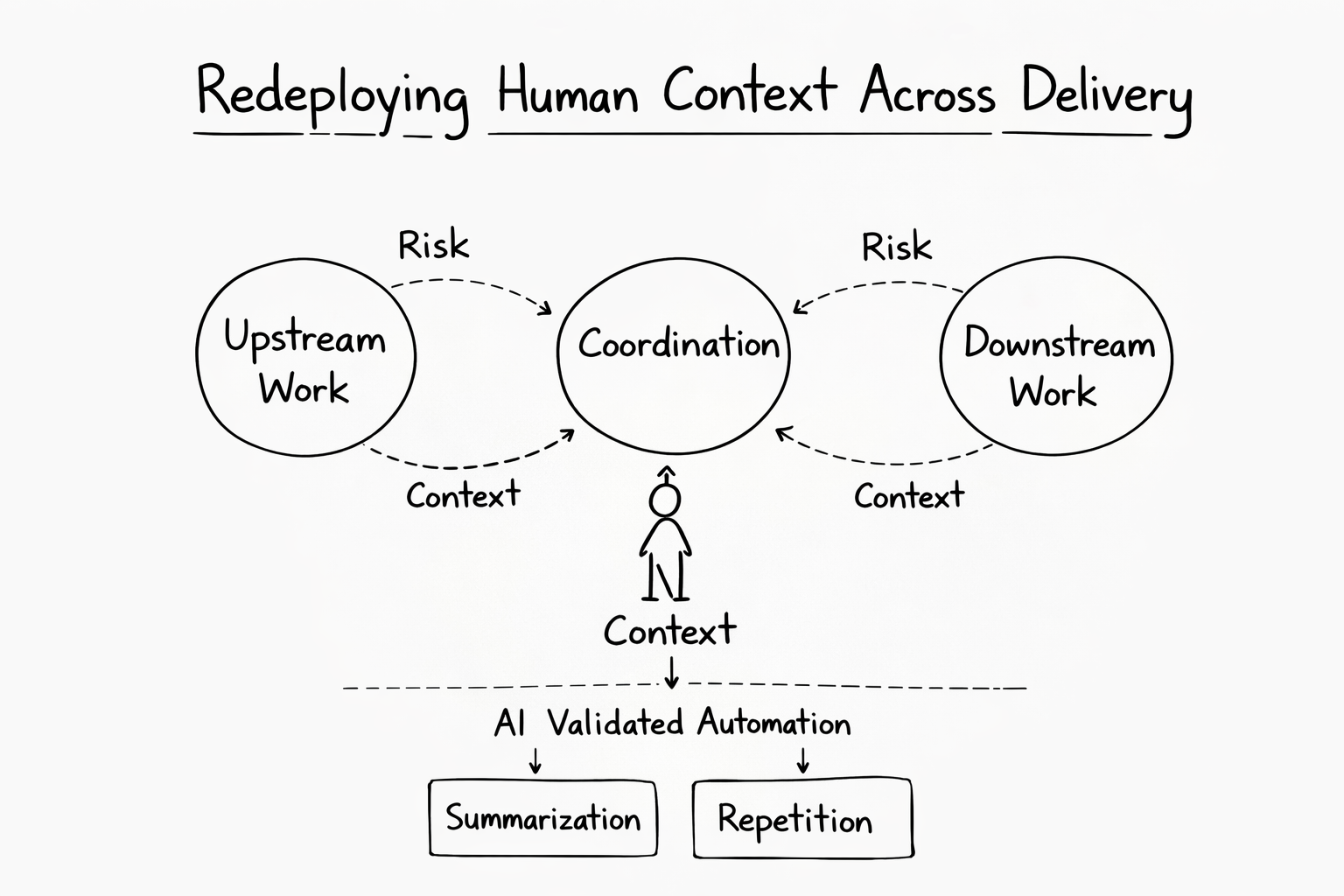

We posit that engineering teams function under the O-Ring Invariant. Value is either added or destroyed at specific gates. What happens at one step shapes the beliefs and risks at the next. In a sequential chain of workers, the probability of project success is the product of the probabilities of success at each node. If any node's probability approaches zero, then the entire project probability approaches zero. This multiplicative property implies Strict Complementarity. The value of improving one worker's quality depends entirely on the quality of every other worker in the chain. Placing a brilliant engineer at the end of a chain of junior developers is economic waste. Their multiplier is applied to a base near zero. Conversely, placing that engineer at the start raises the probability ceiling for everyone who follows. (Source: [PAPER-AI-REPLACEMENT]).
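The multiplicative claim can be made concrete with a minimal sketch. The chains, the probabilities, and the function names below are illustrative assumptions, not figures from the cited paper, and the sketch shows only the complementarity effect, not any ordering or belief effects.

```python
from math import prod


def project_success(node_probs):
    """Probability the whole chain succeeds: the product of per-node probabilities."""
    return prod(node_probs)


def marginal_gain(node_probs, index, delta):
    """Increase in project success from raising one node's probability by delta."""
    improved = list(node_probs)
    improved[index] = min(1.0, improved[index] + delta)
    return project_success(improved) - project_success(node_probs)


# Hypothetical chains: same final node, different upstream quality.
strong_chain = [0.95, 0.95, 0.95, 0.90]
weak_chain = [0.50, 0.50, 0.50, 0.90]

# The same +0.05 improvement to the last node pays off very differently.
gain_strong = marginal_gain(strong_chain, 3, 0.05)
gain_weak = marginal_gain(weak_chain, 3, 0.05)

print(f"strong chain success: {project_success(strong_chain):.3f}, gain: {gain_strong:.4f}")
print(f"weak chain success:   {project_success(weak_chain):.3f}, gain: {gain_weak:.4f}")
```

The gain from improving one node is scaled by the product of all the other nodes, which is what Strict Complementarity means in this model.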

This creates a sequential reactor. A Senior Engineer is not a static asset. They are a probabilistic node in a directed graph. Their output is the input constraint for the next node. If the Solutions Architect fails, the Backend Engineer receives noise. If the Backend Engineer receives noise, their incentive to exert effort drops to zero. Effort applied to noise yields failure. This explains the phenomenon detailed in "Why Distributed Teams Stay Busy But Deliver Less." Distributed teams stay busy but deliver less because they are rationally conserving energy in the face of upstream entropy. The busyness is a mask for the lack of flow.

Replacement Kinetics: Automating the End and Protecting the Center

Teams arranged in sequence do not respond symmetrically when automation enters the line. The effect of replacing one position depends entirely on how beliefs and incentives propagate upstream. We define the Incentive Derivative to measure this. It balances the direct cost savings of replacing a human against the ripple effect of wage inflation upstream. By analyzing the sign of this derivative across different positions we derive the Kinetics of Replacement. This maps which roles are structurally exposed to AI and which are structurally protected. (Source: [PAPER-AI-REPLACEMENT]).

The end of the pipeline behaves differently from every other point in the sequence. When the last worker shirks, the project succeeds with the probability established by the previous worker. Adding AI after them is impossible because there is no after. Their incentive to shirk is structural. It is determined purely by the project technology. Replacing the final worker yields clean savings. The principal avoids paying the expected wage and instead pays the fixed AI compute cost. There is no incentive distortion propagated upstream because no one is downstream of the end. In nearshore engineering, this corresponds to roles like QA Validation and Data Aggregation. These steps are structurally tolerant to automation. We automate the end. See also: AI Placement in Pipelines.

Replacing a middle position disrupts the informational link that peer monitoring depends on. Worker B observes the effort of Worker A. Worker C observes the effort of Worker B. If position B is filled by AI, both neighbors experience a massive shift in their incentive landscape. Workers before B suddenly realize that the middle of the chain is safe. The AI at position B will always exert effort. This raises their probability of success given shirking. To keep them working, the principal must drastically raise their wages. Workers after B lose the human signal they relied on. The chain of peer pressure is broken. The middle worker is the reference base for the team. They provide the context. If you replace the Integration Architect with an AI, you create a chasm. The upstream developers do not know if their code fits. The downstream developers do not know if the specs are valid. The structural weight of the middle prevents automation. This is exactly why AI is not replacing engineers; it is optimizing dev team structures. We protect the center. See also: When Fixing AI Code Costs More.

The Wage Equation and Platform Economics

One of the most counterintuitive findings of our sequential model is that the optimal application of AI does not lower wages uniformly. It creates a phenomenon of Wage Compression. The internal wage difference between the highest paid and lowest paid members of the chain shrinks. It happens because the bottom and middle wages must rise to maintain discipline in an automated world. (Source: [PAPER-PLATFORM-ECONOMICS]).

We define the Shirking Margin. This is the probability that the project succeeds given that a specific worker shirks under a specific AI replacement policy. If this margin is high, the worker believes the project will ship even if they do nothing. Their incentive to work drops. The Incentive Compatibility Constraint requires that the expected utility of working exceeds the expected utility of shirking. Solving for the minimum wage yields the Wage Equation. The wage equals the cost of effort divided by the incentive margin. As AI secures the downstream stages of the pipeline, the shirking margin rises. The worker knows the robot will catch the error. Consequently, the incentive margin shrinks. As the denominator shrinks, the required wage explodes. See also: Sequential Effort Incentives.
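A minimal sketch of the Wage Equation follows. It assumes the wage is paid only on project success and that the incentive margin is the success probability when working minus the success probability when shirking (the Shirking Margin); the source does not spell out either detail, so both are assumptions. Under them, the constraint `wage * p_work - cost >= wage * p_shirk` rearranges to `wage >= cost / (p_work - p_shirk)`. The numbers are made up.

```python
def required_wage(effort_cost, p_success_work, p_success_shirk):
    """Minimum success-contingent wage that makes working at least as good as shirking.

    Assumed form: wage = effort_cost / (p_success_work - p_success_shirk).
    """
    incentive_margin = p_success_work - p_success_shirk
    if incentive_margin <= 0:
        # Effort no longer changes the outcome, so no finite wage induces it.
        return float("inf")
    return effort_cost / incentive_margin


# Hypothetical numbers: hold effort cost and p_work fixed, raise the shirking margin.
effort_cost = 10.0
p_work = 0.90
for p_shirk in (0.30, 0.60, 0.80, 0.89):
    wage = required_wage(effort_cost, p_work, p_shirk)
    print(f"shirking margin {p_shirk:.2f} -> required wage {wage:.1f}")
```

The required wage grows without bound as the shirking margin approaches the success probability under effort, which is the explosion the paragraph above describes.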

This leads to a harsh economic truth for nearshore staffing. Cheap talent is the most expensive talent. In a traditional model, you might try to save money by hiring lower-cost engineers for the middle of the chain. In an AI-augmented chain, this is fatal. Because the incentives in the middle are naturally eroding due to downstream automation, a worker with a low threshold for effort will almost certainly shirk. The wage required to fix this tends toward infinity. You can have cheap talent or you can have high reliability in an AI-augmented team. You cannot have both. If you use AI to generate code, you need a human smart enough to know when the AI is lying. That human costs more. See also: Why Cheap Talent Is Expensive.

Axiom Cortex: The Neuro-Psychometric Evaluation Engine

How do we find the humans who can survive the middle of the chain? We use the Axiom Cortex. This is strictly a Neuro-Psychometric Evaluation Engine. It evaluates human talent. It is not a Security Tool. It is not a Firewall. It is not a Kill Switch. It measures Cognitive Fidelity. (Source: [PAPER-AXIOM-CORTEX]).

The industry is drowning in noise. The Signal-to-Noise Ratio of the modern hiring market is approaching zero. Generative AI has democratized the ability to sound competent. A junior developer with an LLM can produce a resume that looks identical to that of a Principal Engineer. The artifact has completely decoupled from the cognition. This is the Turing Trap. We reject Boolean keyword matching. Boolean logic assumes that the presence of a token equals the presence of a skill. It ignores semantic proximity. We operate in Vector Space. We use Neural Search to map the candidate's cognition against the ideal blueprint of the role. We calculate the Cosine Similarity and the Wasserstein Distance between them. We find the concept. We find the capability. See also: Axiom Cortex Architecture.
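For readers unfamiliar with the two measures named above, here is a minimal sketch using only the standard library. It is not the Axiom Cortex implementation: the vectors are made-up stand-ins for embeddings, and the Wasserstein function covers only the simple case of two equal-sized one-dimensional samples.

```python
from math import sqrt


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (1.0 means same direction)."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = sqrt(sum(x * x for x in a))
    norm_b = sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_a * norm_b)


def wasserstein_1d(samples_a, samples_b):
    """1-Wasserstein distance between two equal-sized 1-D empirical samples.

    With equal sizes and uniform weights this is the mean absolute
    difference between the sorted samples.
    """
    if len(samples_a) != len(samples_b):
        raise ValueError("this simplified version needs equal-sized samples")
    pairs = zip(sorted(samples_a), sorted(samples_b))
    return sum(abs(x - y) for x, y in pairs) / len(samples_a)


# Hypothetical embedding vectors for a role blueprint and a candidate.
role_blueprint = [0.8, 0.1, 0.6, 0.3]
candidate = [0.7, 0.2, 0.5, 0.4]

print(f"cosine similarity: {cosine_similarity(role_blueprint, candidate):.3f}")
print(f"wasserstein (1-D): {wasserstein_1d(role_blueprint, candidate):.3f}")
```

Cosine similarity compares direction and ignores magnitude, while the Wasserstein distance compares how values are distributed, so the two measures capture different aspects of the match.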

Axiom Cortex executes a Phasic Micro-Chunking Protocol. We break the evaluation down into atomic units. We process them in strict isolation to prevent the Halo Effect. We measure Latent Traits that are invisible to standard testing. We measure Architectural Instinct. Can they visualize system topology before coding? We measure Problem Solving Agility. How fast do they pivot when a hypothesis fails? We measure Learning Orientation. Do they admit ignorance? We count Authenticity Incidents. An engineer who admits they do not know something is safe. An engineer who bluffs is a ticking time bomb. We evaluate specific technical domains with forensic precision. We deploy system-design assessments to measure structural thinking. We deploy microservices and gRPC assessments to measure boundary management. We evaluate cloud infrastructure via AWS and Kubernetes assessments. We measure data pipeline competence via data-engineering and machine-learning assessments. We assess frontend architecture via a React/TypeScript assessment. We assess backend logic via Python, Go, and Rust assessments.

Now let us look at the math of the evaluation. We use an L2-Aware Mathematical Validation Layer. We regress the observed communication score on semantic content versus form errors. We mathematically subtract the penalty for grammar mistakes if the semantic payload is correct. We use Fréchet Semantic Distance to show that a Spanish-influenced explanation of a technical concept maps to the same semantic point as a native English explanation. Math does not have an accent. We validate the topology of the thought. We are measuring the geometry of their understanding. This ensures we do not penalize ESL candidates for linguistic artifacts while missing their technical brilliance. See also: Cognitive Fidelity Index.
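The regression step can be sketched as follows, under stated assumptions. The linear form `score = b0 + b1 * semantic + b2 * form_errors`, the function names, and the synthetic data are all illustrative; the source does not specify the model, and the Fréchet Semantic Distance step is not shown. The idea is to estimate how much of the observed score is explained by form errors and then add that portion back.

```python
def fit_two_predictor_ols(y, x1, x2):
    """Least-squares fit of y = b0 + b1*x1 + b2*x2 using centered normal equations."""
    n = len(y)
    mean_y, mean_1, mean_2 = sum(y) / n, sum(x1) / n, sum(x2) / n
    d1 = [v - mean_1 for v in x1]
    d2 = [v - mean_2 for v in x2]
    dy = [v - mean_y for v in y]
    s11 = sum(a * a for a in d1)
    s22 = sum(b * b for b in d2)
    s12 = sum(a * b for a, b in zip(d1, d2))
    s1y = sum(a * c for a, c in zip(d1, dy))
    s2y = sum(b * c for b, c in zip(d2, dy))
    det = s11 * s22 - s12 * s12
    if det == 0:
        raise ValueError("predictors are collinear; coefficients are not identifiable")
    b1 = (s1y * s22 - s2y * s12) / det
    b2 = (s2y * s11 - s1y * s12) / det
    b0 = mean_y - b1 * mean_1 - b2 * mean_2
    return b0, b1, b2


def remove_form_penalty(score, form_errors, b2):
    """Add back the share of the score attributed to form errors (b2 is usually negative)."""
    return score - b2 * form_errors


# Synthetic data (made up): score = 1 + 0.8 * semantic - 0.5 * form_errors.
semantic = [5, 7, 6, 9, 8]
form_errors = [0, 4, 1, 3, 2]
scores = [1 + 0.8 * s - 0.5 * e for s, e in zip(semantic, form_errors)]

b0, b1, b2 = fit_two_predictor_ols(scores, semantic, form_errors)
adjusted = [remove_form_penalty(sc, e, b2) for sc, e in zip(scores, form_errors)]
print(f"estimated form-error coefficient: {b2:.3f}")
print("adjusted scores:", [round(a, 2) for a in adjusted])
```

After adjustment, two candidates with the same semantic content receive the same score regardless of how many form errors they made, which is the behavior the paragraph above describes.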

Human Capacity Spectrum Analysis

Traditional hiring relies on static capacity markers. Years of experience. Framework lists. Past titles. These lagging indicators fail to predict future performance in high-velocity environments. We propose Human Capacity Spectrum Analysis. This is a probabilistic method for modeling an engineer's potential energy and kinetic availability as a multi-dimensional vector. By decoupling skill from capacity, we isolate the latent traits that determine long-term value. (Source: [PAPER-HUMAN-CAPACITY]).

Unlike scalar rankings, HCSA assigns a vector to every engineer. This allows for Spectral Matching. We match candidate vectors to project complexity vectors. The alignment score is defined not by keyword overlap but by the cosine similarity of these vectors. This approach is designed to prevent the Overqualified Mismatch, where high capacity meets low complexity, leading to boredom. It is also designed to prevent the Peter Principle Promotion, where low capacity meets high complexity, leading to failure. We measure the Autodidact Signal. This is the velocity at which an engineer acquires and implements new structural concepts without formal instruction. In an era where the half-life of a framework is eighteen months, proficiency in a specific tool is a temporary asset. The Autodidact Signal is the derivative of the knowledge graph. It represents the rate of change and the future potential of the individual. See also: Human Capacity Spectrum Analysis.

The Compliance Singularity and Shadow IT Blast Radius

While the Factory Fallacy creates systemic inefficiencies, the rapid acceleration of software development fueled by artificial intelligence has introduced emergent threats. These are acute red flags signaling that traditional governance models are no longer sufficient. We face the Compliance Singularity. This is the point where the rate of change in software delivery outpaces manual compliance checks. The result is Defect Escape Velocity. Vulnerabilities and noncompliant code bypass human review and automatically reach production. (Source: [PAPER-PLATFORM-ECONOMICS]).

Compounding these architectural risks is the Shadow IT Blast Radius. This describes the maximum potential for data exfiltration or systemic corruption stemming from a single unmanaged endpoint in a distributed architecture. In today's distributed world, a developer's personal machine can serve as a bridge between the public internet and the internal Virtual Private Cloud. This single point of compromise can lead to an effectively unbounded radius of access. Attackers can move laterally throughout the entire system. This threat renders obsolete governance models that rely on trust and static policy documents. The velocity of modern software delivery has rendered passive controls useless.

We must enforce the Governance as Code Mandate. We deploy real security architecture to collapse the blast radius. We enforce Zero Trust Architecture via Identity Providers. SSO integration is mandatory across all platform access points. The IdP manages the identity layer with cryptographic certainty. SCIM provisioning automates the lifecycle of user access, ensuring immediate revocation upon termination. VDI secures remote access by keeping source code off local machines. MDM locks the endpoint, ensuring compliance with encryption and patch mandates. If you rely on a PDF handbook for compliance, you have already failed. Security must be compiled into the operating model. See also: Why Governance Doesn't Prevent Risk.
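To make "revocation upon termination" concrete, here is a minimal sketch of the deactivation request defined by the SCIM 2.0 protocol (RFC 7644): a PATCH against the user resource that sets `active` to false. The base URL and user ID are hypothetical placeholders, authentication headers are deliberately omitted, and whether a given identity provider sends this request or a DELETE on termination depends on its configuration.

```python
import json

# Schema URN for SCIM 2.0 PATCH requests, as defined in RFC 7644.
SCIM_PATCH_SCHEMA = "urn:ietf:params:scim:api:messages:2.0:PatchOp"


def build_deactivation_request(base_url, user_id):
    """Build (method, url, headers, body) for a SCIM PATCH that sets active=false.

    Nothing is sent over the network; the caller adds authentication
    and passes the pieces to an HTTP client of their choice.
    """
    body = {
        "schemas": [SCIM_PATCH_SCHEMA],
        "Operations": [{"op": "replace", "path": "active", "value": False}],
    }
    url = f"{base_url.rstrip('/')}/Users/{user_id}"
    headers = {"Content-Type": "application/scim+json"}
    return "PATCH", url, headers, json.dumps(body)


# Hypothetical endpoint and user ID, for illustration only.
method, url, headers, body = build_deactivation_request(
    "https://scim.example.com/v2/", "abc123"
)
print(method, url)
print(body)
```

Expressing revocation as a request a pipeline can issue and log, rather than as a step in a handbook, is the sense in which governance becomes code.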

Redefining Performance in the AI-Augmented Era

The software development world races ahead supercharged by AI yet the methods for gauging engineer performance feel stuck in neutral. Organizations grapple with a significant disconnect. Traditional benchmarks simply