The Polyglot Persistence Fallacy: Stack Dilution Risks in Distributed Architectures

Why polyglot persistence weakens engineering teams. Learn how stack dilution destroys data integrity and how CTOs fix it with specialization.

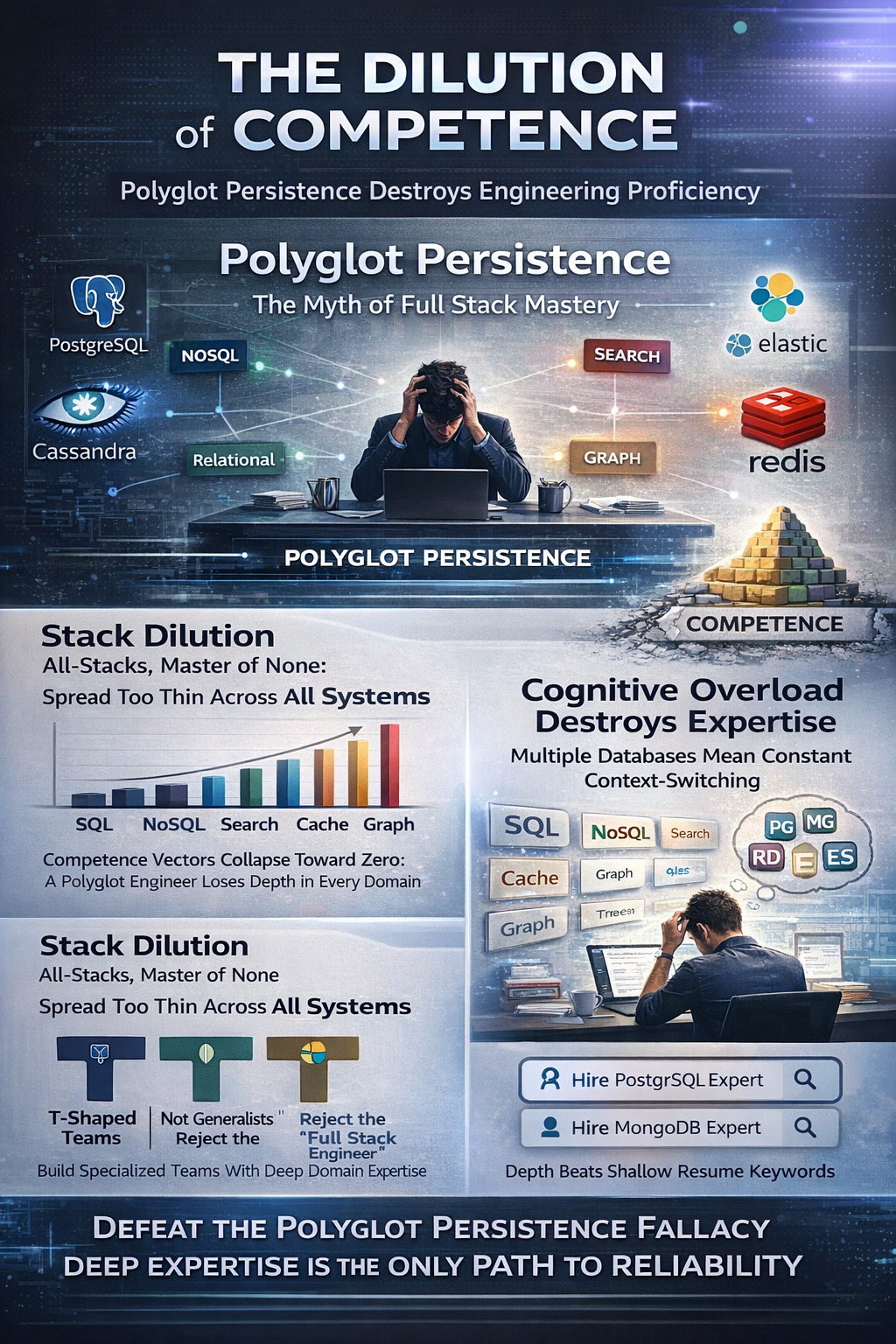

Executive Summary: The Dilution of Competence

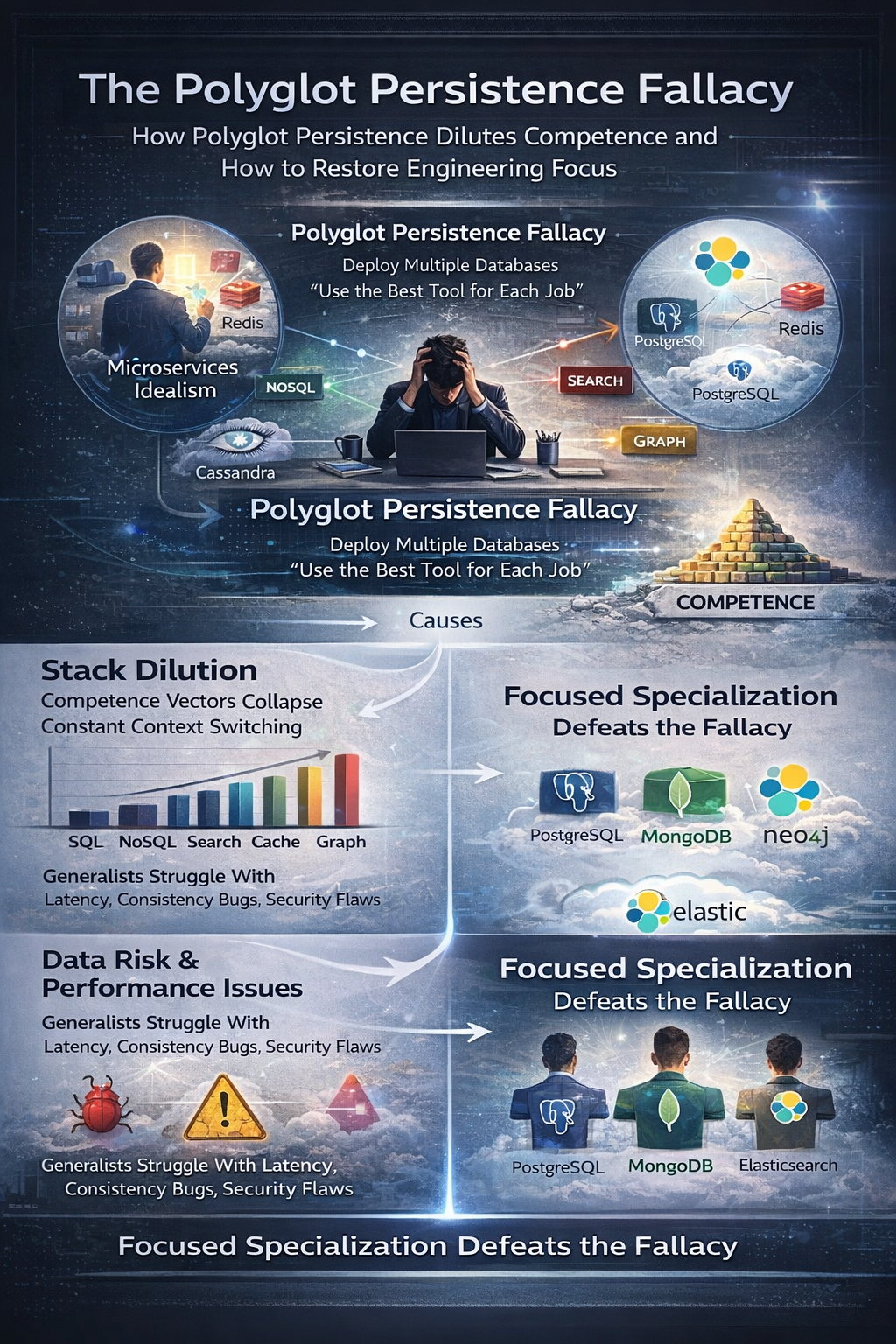

The modern distributed architecture is a lie. We tell ourselves that we are building "Microservices." We tell ourselves that we are using "The Right Tool for the Job." We deploy Redis for caching. We deploy Cassandra for wide-column writes. We deploy Elasticsearch for search. We deploy Neo4j for graphs. We call this Polyglot Persistence. It sounds sophisticated. It sounds architectural.

It is actually an operational suicide pact.

The Polyglot Persistence Fallacy is the dangerous assumption that a single engineering team can maintain high Cognitive Fidelity across multiple, distinct data storage paradigms simultaneously. It assumes that the cognitive load required to operate a relational database is fungible with the cognitive load required to operate a graph database. This is mathematically false.

When you demand that an engineer master five different persistence layers, you do not get five times the capability. You get Stack Dilution. Each component of their competence vector shrinks. Their expertise in any single domain falls sharply. You create a team of "Generalists" who can write a "Hello World" in five query languages but cannot tune a garbage collection pause in any of them.

This article dissects the physics of this failure. We use the Human Capacity Spectrum Analysis to show that talent is a vector with finite magnitude. We examine Axiom Cortex Architecture data on latent trait inference. We demonstrate why the "Full Stack Engineer" is a myth that destroys data integrity (see "Why The Full Stack Engineer Is Bad At Everything"). We lay out the architectural doctrine required to survive the data layer.

1. The Vector Space of Competence

1.1. Finite Magnitude in Cognitive Vectors

Engineering talent is not infinite. It is constrained by the laws of physics and time. We model an engineer’s capacity not as a list of keywords, but as a vector in a high-dimensional space. The magnitude of this vector ($|C|$) represents their total cognitive capacity. The direction represents their specialization.

When a hiring manager demands a "Polyglot Engineer," they are asking for a vector with large components along every axis at once. Vector mathematics dictates the result. For a fixed magnitude, the components along individual axes ($C_{sql}$, $C_{nosql}$, and so on) must shrink, on average, as the number of dimensions increases.

$$ |C|^2 = C_{sql}^2 + C_{nosql}^2 + C_{graph}^2 + C_{cache}^2 $$

If $|C|$ is fixed (and it is, by the limits of human neurobiology), then adding terms to the right-hand side forces the terms to shrink on average. With an even split across $n$ dimensions, each component is $|C|/\sqrt{n}$.

This is Stack Dilution.
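A quick numeric check of the equation above, under the simplifying assumption that capacity is split evenly across $n$ orthogonal dimensions. The even split and the function name are illustrative only, not part of any assessment model.

```python
import math

def per_dimension_magnitude(total_magnitude: float, dimensions: int) -> float:
    """Component size when |C| is split evenly across n orthogonal dimensions.

    Solves |C|^2 = n * c^2 for c, i.e. c = |C| / sqrt(n).
    The even split is an illustrative assumption.
    """
    if dimensions < 1:
        raise ValueError("dimensions must be >= 1")
    return total_magnitude / math.sqrt(dimensions)

# Hypothetical fixed capacity |C| = 1.0, spread over 1 to 5 persistence paradigms.
for n in range(1, 6):
    print(n, round(per_dimension_magnitude(1.0, n), 3))
```

Going from one specialty to five cuts each component to about 0.447, roughly 45% of the specialist's value, even though $|C|$ never changed.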

A candidate who claims expertise in PostgreSQL, MongoDB, Redis, Neo4j, and Cassandra is lying. They are not lying maliciously. They are lying statistically. They possess "Tutorial Knowledge." They know the syntax. They do not know the failure modes. They do not know the edge cases of the replication protocol under network partition.

1.2. The Resume Fallacy

We see this daily in the Axiom Cortex evaluations. Resumes are stuffed with keywords. Candidates list every database invented since 1990. This is the Resume Fallacy (see "Why Resumes Don't Translate To Results").

The industry incentivizes this dishonesty. Applicant Tracking Systems (ATS) use Boolean logic. They scan for keywords. If the resume lacks "Cassandra," it is rejected. So candidates add "Cassandra." They watched a YouTube video on it once. Now they are "Experts."

This drives the Signal-to-Noise Ratio toward zero. The hiring manager sees a "Perfect Match." The Axiom Cortex sees a "Paper Tiger."
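A minimal sketch of the Boolean keyword screening described above, assuming a simple AND over required keywords. The function name and the sample resumes are hypothetical; real ATS products vary. The filter cannot tell a resume that merely lists "Cassandra" from one that demonstrates depth.

```python
import re

def passes_keyword_filter(resume_text: str, required: list[str]) -> bool:
    """Naive ATS-style screen: every required keyword must appear as a word.

    Matching is case-insensitive and purely lexical; it says nothing
    about how deeply the candidate knows the technology.
    """
    words = set(re.findall(r"[a-z0-9+#.]+", resume_text.lower()))
    return all(keyword.lower() in words for keyword in required)

required = ["Cassandra", "Postgres"]

# Hypothetical resumes: one lists keywords, one describes real operational depth.
keyword_stuffed = "Skills: Postgres, Mongo, Redis, Neo4j, Cassandra, Elastic"
deep_but_phrased_differently = (
    "Tuned PostgreSQL autovacuum and ran Apache Cassandra compaction "
    "in production for five years"
)

print(passes_keyword_filter(keyword_stuffed, required))               # True
print(passes_keyword_filter(deep_but_phrased_differently, required))  # False
```

The second candidate is rejected because they wrote "PostgreSQL" rather than "Postgres," while the first passes on a bare list of names. That is the signal-to-noise problem in miniature.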

As stated in Nearshore Platformed, "The single biggest failure of traditional recruitment technology lies in its reliance on simple keyword matching. A system sees 'Java' in a job description and 'Java' on a resume and declares a potential match." This is insufficient. We must measure the Semantic Depth of that match. Does the candidate understand the B-Tree index structure? Or do they just know how to write a SELECT statement?

2. The Database Dilemma: Specificity vs. Generalization

2.1. The Relational vs. NoSQL Schism

The cognitive models required for different persistence layers are antagonistic. They conflict.

Relational Systems (PostgreSQL, MySQL):

These require a mental model based on ACID (Atomicity, Consistency, Isolation, Durability). The engineer must think in terms of normalized data structures. They must understand joins. They must understand lock contention. They must prioritize consistency over availability (CP systems).

NoSQL Systems (MongoDB, Cassandra):

These require a mental model based on BASE (Basically Available, Soft state, Eventual consistency). The engineer must think in terms of denormalized aggregates. They must understand sharding. In Dynamo-style stores such as Cassandra, they must understand read repair and tunable consistency levels. They must often prioritize availability over consistency (AP systems), although MongoDB's default primary-based replication leans toward consistency.

When you ask one engineer to manage both, you force them to Context Switch their fundamental understanding of data physics. This introduces Cognitive Dissonance.

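An engineer trained in Postgres will try to normalize data in Cassandra. Because Cassandra has no joins, every normalized relationship becomes an extra query, and read performance collapses. An engineer trained in Mongo will try to denormalize data in Postgres. Every duplicated field must then be updated in several places, which inflates write cost and invites update anomalies.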
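To make the read-performance point concrete, here is a toy Python model (not Cassandra's actual API) of a store that, like Cassandra, cannot join across tables. The normalized layout forces a client-side join with one lookup per referenced row; a denormalized, query-shaped table answers the same question with a single partition read. The table layouts and the round-trip counter are illustrative assumptions.

```python
from dataclasses import dataclass, field

@dataclass
class JoinlessStore:
    """Toy key-value store that counts lookups (each one a network round trip)."""
    tables: dict = field(default_factory=dict)
    round_trips: int = 0

    def get(self, table: str, key):
        self.round_trips += 1
        return self.tables[table][key]

def orders_normalized(store: JoinlessStore, customer_id: str) -> list:
    # Relational habit: fetch order ids, then fetch each order row separately.
    order_ids = store.get("customer_orders", customer_id)
    return [store.get("orders", oid) for oid in order_ids]

def orders_denormalized(store: JoinlessStore, customer_id: str) -> list:
    # Query-first modeling: one table keyed by the access pattern.
    return store.get("orders_by_customer", customer_id)

# Hypothetical data: one customer with 50 orders.
orders = {f"o{i}": {"id": f"o{i}", "total": i * 10} for i in range(50)}
store = JoinlessStore(tables={
    "customer_orders": {"c1": list(orders)},
    "orders": orders,
    "orders_by_customer": {"c1": list(orders.values())},
})

normalized = orders_normalized(store, "c1")
normalized_trips, store.round_trips = store.round_trips, 0
denormalized = orders_denormalized(store, "c1")

print(normalized_trips, store.round_trips)  # 51 1
```

Fifty-one round trips versus one, for identical results. On a real cluster each lookup may land on a different node, so the gap widens under load. The price of the denormalized table is that every write must update each query table holding that data, which is the trade-off a Cassandra specialist is paid to manage.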

The Polyglot Persistence Fallacy assumes these paradigms are compatible. They are not. They are orthogonal.

2.2. The Graph and Search Complexity

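Add Graph Databases (Neo4j) and Search Engines (Elasticsearch) to the mix. Now the complexity scales quadratically, because every pair of paradigms is a boundary where consistency models, data movement, and failure modes must be reconciled.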
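Counting each pair of paradigms as one boundary an engineer must reason about (a simplifying assumption) shows the quadratic growth directly: $n$ stores produce $n(n-1)/2$ pairs.

```python
from itertools import combinations

def paradigm_boundaries(stores: list[str]) -> list[tuple[str, str]]:
    """Every unordered pair of stores is one boundary to reason about."""
    return list(combinations(stores, 2))

stack = ["PostgreSQL", "Cassandra", "Redis", "Elasticsearch", "Neo4j"]
for n in range(1, len(stack) + 1):
    print(n, len(paradigm_boundaries(stack[:n])))
```

Five stores yield ten boundaries. Each store added to the stack adds as many new boundaries as there are stores already in it.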

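Graph theory requires thinking in nodes and edges. It requires understanding traversal cost: a native graph store that follows stored relationships pays roughly $O(1)$ per hop, while a relational self-join through a B-Tree index pays $O(\log n)$ per hop. Search engines require understanding inverted indices, tokenization, and relevance scoring.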
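For readers who have not built one, a minimal inverted index shows the mental model search engines demand: text is tokenized, each token maps to the documents containing it, and queries are answered from those postings lists. This sketch uses a naive lowercase tokenizer and raw term-frequency scoring as a stand-in for real analyzers and relevance models (Elasticsearch defaults to BM25). All names and documents are illustrative.

```python
import re
from collections import Counter, defaultdict

def tokenize(text: str) -> list[str]:
    # Naive analyzer: lowercase and split on non-alphanumeric characters.
    return re.findall(r"[a-z0-9]+", text.lower())

def build_index(docs: dict[str, str]) -> dict[str, Counter]:
    """Map each token to a Counter of {doc_id: term frequency}."""
    index: dict[str, Counter] = defaultdict(Counter)
    for doc_id, text in docs.items():
        for token in tokenize(text):
            index[token][doc_id] += 1
    return index

def search(index: dict[str, Counter], query: str) -> list[tuple[str, int]]:
    """Score documents by summed term frequency of the query tokens."""
    scores: Counter = Counter()
    for token in tokenize(query):
        scores.update(index.get(token, Counter()))
    return scores.most_common()

docs = {
    "d1": "Cassandra compaction strategies and compaction tuning",
    "d2": "Postgres vacuum tuning",
    "d3": "Redis persistence: AOF versus RDB",
}
index = build_index(docs)
print(search(index, "compaction tuning"))  # [('d1', 3), ('d2', 1)]
```

Treating Elasticsearch as a key-value store skips exactly this layer: the analyzer choices that decide which tokens exist, and the scoring that decides which document ranks first.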

A "Full Stack" engineer cannot master all of these. They will inevitably default to the "Lowest Common Denominator." They will treat Elasticsearch like a key-value store. They will treat Neo4j like a relational table. They will build a system that is functionally incorrect because they lack the Architectural Instinct to respect the grain of the tool.

This is why Full Stack Engineers are bad at everything (see "Why The Full Stack Engineer Is Bad At Everything"). They are spread too thin. They are the "Jack of all trades, master of downtime."

3. Measuring Fidelity: The Axiom Cortex Protocol

3.1. Latent Trait Inference

We do not trust the resume. We trust the Latent Trait Inference Engine (LTIE).

The Axiom Cortex Architecture evaluates candidates along four dimensions:

- Architectural Instinct (AI): Can they visualize the system topology?

- Problem-Solving Agility (PSA): Can they pivot when constraints change?

- Learning Orientation (LO): Do they admit ignorance?

- Collaborative Mindset (CM): Do they frame work in a team context?

For persistence roles, Architectural Instinct is the critical discriminator. We test this by stripping away the syntax. We do not ask "How do you create a table in DynamoDB?" We ask "Why would you choose DynamoDB over RDS for a high-write, low-latency ledger system? What are the trade-offs in consistency?"

A candidate suffering from Stack Dilution will answer with buzzwords. "Dynamo is web scale." "Mongo is flexible."

A candidate with high Cognitive Fidelity (see "Cognitive Fidelity Index") will answer with physics. "Dynamo allows for single-digit millisecond latency at scale, but we sacrifice complex querying. We need to design the partition keys based on access patterns to avoid hot partitions. If we need ACID transactions across items, we incur a performance penalty."
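The partition-key point in that answer can be checked with a small simulation. This sketch hashes keys onto a fixed number of partitions with MD5, a stand-in for DynamoDB's internal hashing, which is not public. The partition count, key formats, and workload are hypothetical. A single low-cardinality key sends every write to one partition, while a key derived from the access pattern spreads the load.

```python
import hashlib
from collections import Counter

PARTITIONS = 8  # hypothetical partition count

def partition_for(key: str) -> int:
    # Stable hash of the partition key onto a partition number.
    digest = hashlib.md5(key.encode("utf-8")).hexdigest()
    return int(digest, 16) % PARTITIONS

def max_partition_share(keys: list[str]) -> float:
    """Fraction of all writes that land on the busiest partition."""
    load = Counter(partition_for(k) for k in keys)
    return max(load.values()) / len(keys)

# Hypothetical ledger workload: 10,000 writes spread over 1,000 accounts.
writes = [f"acct-{i % 1000}" for i in range(10_000)]

hot_key = ["ledger"] * len(writes)   # one key for the whole ledger
access_pattern_key = writes          # partition by account id

print(max_partition_share(hot_key))  # 1.0
print(round(max_partition_share(access_pattern_key), 3))
```

With one key, the busiest partition absorbs every write; keyed by account, it takes close to one-eighth. The modeling decision, not the database, determines which outcome you get.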

3.2. The Metacognitive Conviction Index

We measure the Metacognitive Conviction Index (MCI). This is the calibration between confidence and accuracy.

A "Polyglot" candidate often displays Low Accuracy / High Confidence. They are dangerous. They believe they know Redis because they used it for session storage once. They will deploy Redis as a primary persistent store without understanding the AOF/RDB persistence trade-offs. They will lose data.
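A simplified model of the trade-off those candidates miss. With RDB snapshots only, a crash loses every write since the last snapshot; with AOF and `appendfsync everysec`, Redis fsyncs the append-only file about once per second, so a crash loses roughly the last second of writes. The timestamps, snapshot interval, and function names below are hypothetical, and the model ignores details such as snapshot duration and background rewrite timing.

```python
def lost_writes_rdb(write_times: list[float], crash_at: float, snapshot_every: float) -> int:
    """Writes lost when only periodic RDB snapshots exist.

    Assumes a snapshot completes exactly at each multiple of snapshot_every.
    """
    last_snapshot = (crash_at // snapshot_every) * snapshot_every
    return sum(1 for t in write_times if last_snapshot < t <= crash_at)

def lost_writes_aof_everysec(write_times: list[float], crash_at: float) -> int:
    """Writes lost with AOF fsynced once per second (worst case: the last second)."""
    last_fsync = float(int(crash_at))  # assumes fsyncs happen at whole seconds
    return sum(1 for t in write_times if last_fsync < t <= crash_at)

# Hypothetical session store: 10 writes per second for 10 minutes, crash at t = 599.5 s.
writes = [i / 10 for i in range(6000)]
crash = 599.5

print(lost_writes_rdb(writes, crash, snapshot_every=300))  # 2995
print(lost_writes_aof_everysec(writes, crash))              # 5
```

In this toy workload the snapshot-only configuration loses about five minutes of writes, while AOF loses half a second's worth. Either may be acceptable for a cache; only the second is defensible for a primary store, and `appendfsync always` narrows the window further at a throughput cost.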

We look for High Accuracy / High Confidence in specific domains, and Honest Ignorance in others. A Senior Postgres Engineer should say "I am an expert in Postgres vacuum tuning. I have limited experience with Cassandra compaction strategies."

This honesty is a signal of Seniority. It is a signal of Fidelity.

4. The Economic Impact of Shallow Knowledge

4.1. The Cost of Data Corruption

Data is the only asset that is irreplaceable. Code can be rewritten. Servers can be redeployed. Data, once corrupted, is gone.

The economic cost of Stack Dilution manifests in Data Corruption. An engineer who does not understand the isolation levels of SQL Server will introduce race conditions. They will leave transactions exposed to anomalies such as phantom reads and lost updates. They will double-charge customers.
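The double-charge failure is easiest to see as an interleaving. The sketch below replays, deterministically and step by step, two concurrent requests that each run a check-then-act sequence against shared state with no locking or conditional write. The `Ledger` class and its methods are hypothetical stand-ins for a row and its statements. The fix shown is a compare-and-set style conditional update, the in-memory analogue of a statement like `UPDATE orders SET charged = 1 WHERE id = @id AND charged = 0` (hypothetical table) followed by a rows-affected check.

```python
class Ledger:
    """Hypothetical in-memory stand-in for an orders row and a payments log."""

    def __init__(self) -> None:
        self.charged = {"order-42": False}
        self.payments: list[str] = []

    def read_charged(self, order_id: str) -> bool:
        return self.charged[order_id]

    def mark_charged_and_pay(self, order_id: str) -> None:
        # Unconditional write: ignores what other requests did since the read.
        self.charged[order_id] = True
        self.payments.append(order_id)

    def conditional_charge(self, order_id: str) -> bool:
        # Compare-and-set: charge only if still uncharged. In a database this is
        # one atomic UPDATE; this single-threaded replay stands in for that atomicity.
        if self.charged[order_id]:
            return False
        self.charged[order_id] = True
        self.payments.append(order_id)
        return True

# Unsafe check-then-act, interleaved: both requests read before either writes.
unsafe = Ledger()
seen_a = unsafe.read_charged("order-42")  # request A sees False
seen_b = unsafe.read_charged("order-42")  # request B also sees False
if not seen_a:
    unsafe.mark_charged_and_pay("order-42")
if not seen_b:
    unsafe.mark_charged_and_pay("order-42")
print(len(unsafe.payments))  # 2: the customer is charged twice

# Conditional update: the check and the write happen as one step.
safe = Ledger()
results = [safe.conditional_charge("order-42"), safe.conditional_charge("order-42")]
print(results, len(safe.payments))  # [True, False] 1
```

Alternatives include running the read and write under `SERIALIZABLE` isolation or taking an update lock on the row; which one fits depends on contention and on the engine, which is exactly the knowledge a diluted generalist lacks.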

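The cost of fixing a data corruption bug in production can run to 1000x the cost of fixing it in design, because you must repair both the code and every record it has already touched. This is the logic of the Defect Amplification Model.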

4.2. The Operational Overhead

Polyglot Persistence increases Operational Overhead. Each new database requires:

- Backup strategies.

- Monitoring pipelines.

- Security patches.

- Upgrade paths.

- Disaster recovery drills.

If your team is diluted, they cannot maintain this operational rigor. They will skip the backups for the "minor" database. They will ignore the security patches for the "internal" cache.

This creates Operational Debt. It accumulates compound interest. Eventually, the system collapses.

As noted in Nearshore Platformed, "The initial 'savings' on hourly rates get eaten alive by hidden operational costs." Hiring a cheap, generalist team to manage a complex polyglot stack is a false economy. You save on the hourly rate. You pay in the outage.

5. Strategic Remediation: The T-Shaped Team

5.1. Rejecting the Generalist

We must stop hiring for "Generalists." We must hire for T-Shaped profiles.

A T-Shaped engineer has broad knowledge of software engineering principles (the horizontal bar) and deep, vertical expertise in one or two specific persistence technologies (the vertical bar).

We build teams by combining complementary T-Shaped vectors.

- Engineer A: Deep Expert in PostgreSQL and TimescaleDB.

- Engineer B: Deep Expert in MongoDB and Redis.

- Engineer C: Deep Expert in Elasticsearch and Data Pipelines.

Together, they form a Polyglot Team. Individually, they remain Specialists.

This respects the Cognitive Load limit. No single human is responsible for mastering the entire universe of data storage.
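The composition rule can be checked mechanically. This sketch, with hypothetical engineers and a hypothetical production stack mirroring the team list above, reports which stores have no deep owner on the team. It models only depth of expertise, not availability or on-call load.

```python
def uncovered_stores(stack: set[str], deep_expertise: dict[str, set[str]]) -> set[str]:
    """Stores in the stack that no engineer owns as a vertical specialty."""
    covered = set().union(*deep_expertise.values())
    return stack - covered

team = {
    "Engineer A": {"PostgreSQL", "TimescaleDB"},
    "Engineer B": {"MongoDB", "Redis"},
    "Engineer C": {"Elasticsearch"},
}
stack = {"PostgreSQL", "Redis", "Elasticsearch", "Neo4j"}

print(uncovered_stores(stack, team))  # {'Neo4j'}
```

An uncovered store is the signal to hire a specialist or to question whether that store belongs in the stack, rather than to hand it to whoever is least busy.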

5.2. The Platform Strategy

We leverage Platform Economics (see "Nearshore Platform Economics"). We do not build everything from scratch. We use managed services where possible to offload the operational burden.

But managed services do not replace the need for architectural understanding. AWS RDS manages the server. It does not manage the schema. It does not manage the query performance. You still need the expert.

We use the TeamStation AI platform to find these experts. We use Sirius AI to scan for the specific vector magnitude. We do not look for "Database Developers." We look for "Postgres Internals Experts." We look for "Cassandra Data Modelers."

5.3. Hiring for the Data Layer

When hiring for the persistence layer, specificity is the only metric that matters.

- For Relational: Hire MySQL developers and SQL Server developers.

- For NoSQL: Hire Cassandra developers and DynamoDB developers.

- For Search: Hire Elasticsearch developers.

- For Caching: Hire Redis developers.

Do not combine these into a single requisition. Do not ask for a "Full Stack Developer with experience in Postgres, Mongo, Redis, and Elastic." You will get a liar. You will get a diluted stack.

6. The Latency Horizon

6.1. Physics of Distributed Data

The ultimate constraint is the speed of light. In a distributed system, latency is the governing law.

Polyglot Persistence often introduces Network Latency. Data must move between the SQL store, the NoSQL store, and the Search index. This movement takes time. It introduces Eventual Consistency lag.

An engineer with Stack Dilution does not understand this. They treat the network as reliable. They treat latency as zero. These are the first two of the classic Fallacies of Distributed Computing. They build systems that are "Correct" in the unit test but "Broken" in the real world.
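A minimal sketch of the eventual-consistency lag described in this section, assuming a primary store and a search index fed asynchronously with a fixed replication delay. The class, the delay value, and the timestamps are hypothetical; a real pipeline adds queueing, retries, and refresh intervals such as Elasticsearch's `index.refresh_interval`. A read that arrives inside the lag window sees stale data.

```python
from dataclasses import dataclass, field

@dataclass
class AsyncReplicatedIndex:
    """Primary store plus a search index that applies each write after a delay."""
    lag_seconds: float
    primary: dict = field(default_factory=dict)
    pending: list = field(default_factory=list)  # (visible_at, key, value)

    def write(self, now: float, key: str, value: str) -> None:
        self.primary[key] = value
        self.pending.append((now + self.lag_seconds, key, value))

    def search_read(self, now: float, key: str):
        # The index reflects only writes whose replication delay has elapsed.
        visible = {}
        for visible_at, k, v in self.pending:
            if visible_at <= now:
                visible[k] = v
        return visible.get(key)

store = AsyncReplicatedIndex(lag_seconds=1.5)
store.write(now=0.0, key="sku-1", value="in stock")
store.write(now=10.0, key="sku-1", value="sold out")

print(store.primary["sku-1"])            # sold out
print(store.search_read(10.5, "sku-1"))  # in stock (stale)
print(store.search_read(12.0, "sku-1"))  # sold out
```

A unit test that writes and reads at the same instant against a single in-memory store never exercises that window; production traffic does.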

We need engineers who understand the Latency Horizon. Who understand that data has mass. Who understand that moving data costs energy and time.

This is why we test for System Design. We force candidates to draw the boxes. We force them to calculate the latency budget.
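As an example of the arithmetic involved, here is a latency budget for one request that touches three stores. The per-hop figures and the budget are hypothetical placeholders, not benchmarks. The point is the structure: sequential calls add, while an independent parallel fan-out costs roughly the slowest branch.

```python
# Hypothetical p99 latencies in milliseconds for each dependency.
HOPS_MS = {
    "redis_lookup": 2.0,
    "postgres_query": 15.0,
    "elasticsearch_query": 40.0,
}
BUDGET_MS = 50.0  # hypothetical end-to-end budget for the data layer

def sequential_cost(hops: dict[str, float]) -> float:
    # Each call waits for the previous one, so latencies add.
    return sum(hops.values())

def parallel_cost(hops: dict[str, float]) -> float:
    # Independent calls issued concurrently finish with the slowest one.
    return max(hops.values())

for name, cost in [("sequential", sequential_cost(HOPS_MS)),
                   ("parallel", parallel_cost(HOPS_MS))]:
    verdict = "within" if cost <= BUDGET_MS else "over"
    print(f"{name}: {cost:.1f} ms ({verdict} budget)")
```

The same three calls come to 57.0 ms when chained, over the budget, and 40.0 ms when fanned out, within it, provided they are truly independent. Treat both numbers as rough: adding per-hop p99 values usually overstates a sequential tail, and the slowest branch's p99 understates the tail of a fan-out, because the chance that at least one branch is slow grows with the number of branches.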

7. Conclusion: The Return to Rigor

The era of the "Rockstar Generalist" is over. The complexity of modern systems demands a return to rigor. It demands a return to specialization.

The Polyglot Persistence Fallacy is a trap. It seduces CTOs with the promise of flexibility. It delivers the reality of fragility.

We must build teams that respect the limits of human cognition. We must build teams that value depth over breadth. We must build teams that understand the physics of data.

We do not hire resumes. We hire vectors. We hire magnitude. We hire fidelity.

This is the TeamStation AI doctrine. This is how we engineer the future.

References & Further Reading

- Core Doctrine: "Nearshore Platformed: AI and Industry Transformation"

- Talent Analysis: "Human Capacity Spectrum Analysis"

- Evaluation Engine: "Axiom Cortex Architecture"

- Economic Theory: "Nearshore Platform Economics"

- Hiring Risks: "Why The Full Stack Engineer Is Bad At Everything"

- Hiring Risks: "Why Resumes Don't Translate To Results"

- Hiring Risks: "Why Are Seniors Failing Junior Tasks"

Technical Assessment Hubs

- Relational: PostgreSQL Assessment, MySQL Assessment, SQL Server Assessment, Oracle Database Assessment

- NoSQL: MongoDB Assessment, Cassandra Assessment, CockroachDB Assessment

- Graph & Search: Neo4j Assessment, Elasticsearch Assessment

- Caching: Redis Assessment

- Data Engineering: Data Engineering Assessment, ETL/ELT Assessment, Snowflake Assessment

Hiring Resources

- Hire Data Engineers

- Hire Backend Developers

- Hire DevOps Engineers

- Start the Hiring Process