Team Topologies in the Agentic Workflow Era Beyond 2026

Sequential probability networks replace org charts. Learn how AI agents, telemetry, and T-shaped teams redefine engineering performance beyond 2026.

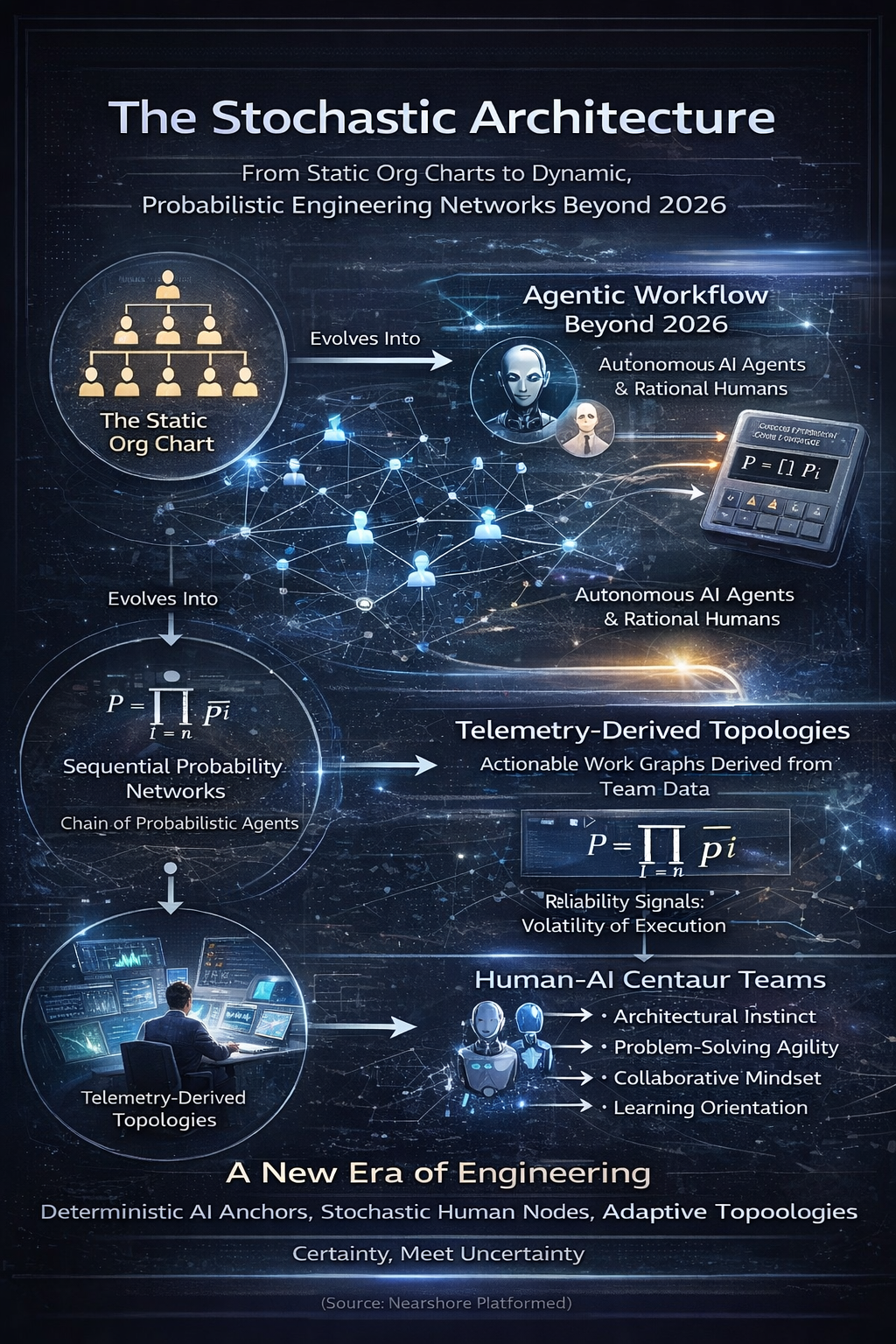

The Stochastic Architecture

1. The Death of the Static Org Chart

The industry operates under a collective hallucination. We visualize engineering organizations as hierarchies, neat pyramids of authority and responsibility defined by job titles. This is a comforting fiction. It is also mathematically false. In the reality of high-velocity software delivery, an engineering organization is not a tree. It is a Sequential Probability Network.

We are exiting the era of intuition. We are entering the era of probability. As we approach the Agentic Workflow era beyond 2026, the fundamental physics of how software is built has shifted. The integration of autonomous AI agents into human teams does not merely add capacity. It alters the propagation of variance across the entire graph.

Traditional management theory treats a team as a collection of isolated tasks. If you have ten engineers and add an eleventh, you expect a linear increase in output. This is the "Factory Fallacy." In a sequential chain of dependencies, the probability of project success ($P$) is the product of the probabilities of success at each node ($p_i$), assuming the $n$ nodes succeed or fail independently.

$$P = \prod_{i=1}^{n} p_i$$

This is the O-Ring Invariant. Because every $p_i$ is at most 1, $P$ can never exceed the weakest node in the chain, and each additional imperfect node pushes it lower. If a single node introduces noise or failure, the probability of the entire system's success collapses toward zero. A "Senior Engineer" is not a static asset. They are a probabilistic node. Their output is the input constraint for the next node. If the Architect fails, the Backend Engineer receives noise. Effort applied to noise yields failure.
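A minimal sketch of the product rule, assuming each node's success is independent of the others. The function name `chain_success` and the sample probabilities are illustrative, not measured values.

```python
from math import prod


def chain_success(node_probs):
    """Probability that every node in a sequential chain succeeds.

    Assumes independent nodes, so the chain probability is the plain
    product of the per-node probabilities.
    """
    for p in node_probs:
        if not 0.0 <= p <= 1.0:
            raise ValueError(f"probability out of range: {p}")
    return prod(node_probs)


# Hypothetical five-node pipelines: one uniformly strong, one with a weak link.
strong_chain = [0.95, 0.95, 0.95, 0.95, 0.95]
weak_link_chain = [0.95, 0.95, 0.50, 0.95, 0.95]

print(round(chain_success(strong_chain), 3))     # 0.774
print(round(chain_success(weak_link_chain), 3))  # 0.407
```

One weak node roughly halves the success probability of the whole chain, regardless of how strong the other four nodes are.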

In the context of Team Topologies in the Agentic Workflow era beyond 2026, we must stop designing for headcount. We must design for probability propagation.

(Source: Nearshore Platformed)

2. The Physics of Sequential Probability

The introduction of Artificial Intelligence into this sequential chain creates a new dynamic. We view AI not as a "super-worker" but as a Deterministic Variance Reducer. A human worker has high variance ($\sigma^2 > 0$). An AI agent, properly constrained, has near-zero variance ($\sigma^2 \approx 0$).

However, placing these deterministic nodes requires rigorous architectural discipline. You cannot simply sprinkle AI into a team and expect velocity. You must respect the Incentive Compatibility Constraint.

The Zeta ($\zeta$) Variable and Incentive Collapse

We model the team as a set of rational agents. Each human worker must choose between exerting effort or shirking. Effort is expensive. Shirking is free. The decision depends on the worker's belief that their effort will matter.

We define the variable $\zeta$ (Zeta) as the probability that the project succeeds even if the worker shirks. As AI agents secure downstream stages of the pipeline, $\zeta$ rises. The upstream human realizes that the robot will catch the error. The safety net becomes a hammock. The incentive to exert effort drops to zero.

This leads to a counter-intuitive conclusion regarding Team Topologies in the Agentic Workflow era beyond 2026. Making the downstream system more reliable increases the cost of motivating upstream humans.
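The effect can be sketched with a textbook incentive-compatibility condition. This is an illustrative assumption, not the model from the cited paper: suppose a worker earns a bonus only when the project succeeds, effort costs them a fixed amount, and the project succeeds with probability `p_success_if_effort` when they work and $\zeta$ when they shirk. Effort is rational only if the bonus times the probability gap covers the cost. All names below are hypothetical, and the sketch holds `p_success_if_effort` fixed while $\zeta$ varies.

```python
def min_bonus(effort_cost, p_success_if_effort, zeta):
    """Smallest success bonus that makes effort rational.

    Effort pays off when bonus * (p_success_if_effort - zeta) >= effort_cost.
    If shirking is as likely to succeed as working, no finite bonus suffices.
    """
    gap = p_success_if_effort - zeta
    if gap <= 0:
        return float("inf")
    return effort_cost / gap


# Hypothetical numbers: effort costs 10 units, the project succeeds with
# probability 0.9 when the worker exerts effort.
for zeta in (0.4, 0.6, 0.8):
    print(zeta, round(min_bonus(10, 0.9, zeta), 1))
```

As $\zeta$ climbs toward the success probability under effort, the required bonus grows without bound, which is the "hammock" effect in numeric form.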

The Structural Protection of the Middle

Research indicates that the center of the pipeline is structurally protected. Replacing a middle position with an AI agent disrupts the informational link that peer monitoring depends on. Upstream workers face a higher $\zeta$. Downstream workers lose the human signal they relied on to update expectations.

Therefore, the optimal topology for the Agentic Era follows a specific pattern:

- Automate the End: The final stage (QA, validation, documentation) has flat incentives. Replacing these roles with AI yields pure savings without incentive distortion.

- Protect the Center: Architecture, integration, and complex logic require human cognition to maintain the "O-Ring" pressure on the rest of the chain.

- Support the Start: The initialization phase benefits from AI scaffolding but requires human intent.

(Source: [PAPER-AI-REPLACEMENT])

3. Telemetry-Derived Topology: Recovering the Graph

We must abandon the practice of designing teams based on opinions or "Spotify Model" copy-pasting. True topology is discovered through work telemetry. We recover the real structure of the organization by analyzing the communication and code-contribution graph.

We utilize specific signals to define the health of the topology.

Throughput ($T$) and Reliability ($R$)

Throughput is not lines of code. It is completed, accepted movement. Reliability is the consistency of that execution.

$$R = 1 - CV(v)$$

Where $CV$ is the Coefficient of Variation, the standard deviation divided by the mean. A high-throughput node with low reliability is an Entropy Injector. Such nodes create motion but destroy value. In a distributed nearshore environment, they are catastrophic. They amplify the "Bullwhip Effect" of variance across time zones.
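A minimal sketch of the reliability signal, reading the formula as one minus the coefficient of variation. It assumes $v$ is a series of per-period throughput counts (for example, accepted work items per sprint) and uses the population standard deviation; both choices are assumptions, and the sample data is invented.

```python
from statistics import mean, pstdev


def reliability(velocities):
    """R = 1 - CV(v), with CV = population standard deviation / mean.

    Not clamped: a very erratic series can produce a negative value.
    """
    mu = mean(velocities)
    if mu <= 0:
        raise ValueError("mean throughput must be positive")
    return 1.0 - pstdev(velocities) / mu


# Two hypothetical nodes with the same average throughput (10 per period).
steady = [10, 9, 11, 10, 10]
erratic = [2, 20, 1, 25, 2]

print(reliability(steady) > reliability(erratic))  # True
```

Both nodes deliver the same average throughput, but only the steady one gives downstream nodes an input they can plan around.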

Context Surface Area ($C$)

We measure the cognitive load on a team member using Context Surface Area.

$$C = \text{repos} \times \sqrt{\text{collaborators}} \times (1 + \text{cross\_team\_fraction})$$

This metric exposes the hidden cost of coordination. A developer touching 10 repositories and coordinating with 5 teams is not "productive." They are an Overloaded Integrator. They are a single point of failure waiting to happen.
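The metric is straightforward to compute from contribution data. The sketch below assumes `cross_team_fraction` is a value between 0 and 1 (the share of a person's collaborators who sit on other teams); the sample inputs are hypothetical.

```python
from math import sqrt


def context_surface_area(repos, collaborators, cross_team_fraction):
    """C = repos * sqrt(collaborators) * (1 + cross_team_fraction)."""
    if repos < 0 or collaborators < 0:
        raise ValueError("counts must be non-negative")
    if not 0.0 <= cross_team_fraction <= 1.0:
        raise ValueError("cross_team_fraction must be between 0 and 1")
    return repos * sqrt(collaborators) * (1.0 + cross_team_fraction)


# A focused contributor versus a hypothetical Overloaded Integrator.
focused = context_surface_area(repos=2, collaborators=4, cross_team_fraction=0.0)
overloaded = context_surface_area(repos=10, collaborators=16, cross_team_fraction=0.5)

print(focused, overloaded)  # 4.0 60.0
```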

When designing Team Topologies in the Agentic Workflow era beyond 2026, we optimize to minimize $C$. We use the Inverse Conway Maneuver. We design the organization to match the desired decoupled architecture. We enforce the "Two-Pizza Rule" not as a management slogan but as a mechanism to cap Context Surface Area.

(Source: [PAPER-PERF-FRAMEWORK])

4. The Axiom Cortex: Cognitive Alignment for the Graph

Building a resilient topology requires more than just filling seats. It requires aligning human cognition with the complexity of the distributed system. A mismatch here leads to "Cognitive Fidelity Decay," where the engineer's mental model diverges from the actual system state.

We utilize the Axiom Cortex, a Neuro-Psychometric Evaluation Engine, to measure the latent traits required for specific nodes in the topology. This is NOT a background check or a code scanning tool. It is a scientific instrument for measuring potential energy.

The Human Capacity Spectrum Analysis (HCSA)

We map every engineer onto a four-dimensional vector:

- Architectural Instinct (AI): The ability to visualize system topology.

- Problem-Solving Agility (PSA): The velocity of traversing the solution space.

- Learning Orientation (LO): The derivative of skill acquisition.

- Collaborative Mindset (CM): The efficiency of information transfer.

(Source: [PAPER-HUMAN-CAPACITY])

For a "Graph Glue" node, someone who connects disparate subsystems, we prioritize high Collaborative Mindset and Architectural Instinct. We might validate this profile using the system-design assessment or the microservices assessment.

For a "Reliability Anchor" node, someone who stabilizes the core, we prioritize Problem-Solving Agility and deep technical proficiency. We validate this using specific stack assessments, such as the Java or Python assessment.

Phasic Micro-Chunking

The Axiom Cortex employs Phasic Micro-Chunking to eliminate the hallucination and bias inherent in traditional interviewing. We do not rely on "vibes." We rely on the Axiom Cortex Architecture methodology. This ensures that the nodes we inject into the probability network have a $p_i$ close to 1.0.

(Source: [PAPER-AXIOM-CORTEX])

5. The Economic Physics: Kingman's Limit and TCO

The economics of Team Topologies in the Agentic Workflow era beyond 2026 are governed by Queueing Theory, specifically Kingman's Limit, the standard approximation for the mean waiting time in a single-server (G/G/1) queue.

$$E[W_q] \approx \left( \frac{\rho}{1-\rho} \right) \left( \frac{C_a^2 + C_s^2}{2} \right) \tau$$

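Here $\rho$ is utilization, $C_a$ and $C_s$ are the coefficients of variation of inter-arrival and service times, and $\tau$ is the mean service time. The first term, $\frac{\rho}{1-\rho}$, dictates that as utilization approaches 100%, expected wait time grows without bound. A team that is "fully booked" is mathematically guaranteed to accumulate delay.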

We explicitly reject the goal of 100% resource utilization. We optimize for Slack. Slack allows the system to absorb the variance introduced by complex integration tasks.
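To see how sharply the utilization term bites, here is a minimal sketch of the approximation. The parameter names are ours, and the inputs (squared coefficients of variation of 1, a mean service time of one day) are illustrative assumptions.

```python
def kingman_wait(rho, ca2, cs2, tau):
    """Approximate mean queue wait: (rho / (1 - rho)) * ((ca2 + cs2) / 2) * tau.

    rho: utilization, 0 <= rho < 1
    ca2: squared coefficient of variation of inter-arrival times
    cs2: squared coefficient of variation of service times
    tau: mean service time (the result is in the same unit)
    """
    if not 0.0 <= rho < 1.0:
        raise ValueError("utilization must be in [0, 1)")
    return (rho / (1.0 - rho)) * ((ca2 + cs2) / 2.0) * tau


# Expected wait in days as a hypothetical team is loaded more heavily.
for rho in (0.50, 0.80, 0.90, 0.95, 0.99):
    print(f"{rho:.2f} -> {kingman_wait(rho, 1.0, 1.0, 1.0):.1f}")
```

With these inputs, moving from 80% to 95% utilization takes the expected wait from about 4 days to about 19. That is the arithmetic behind optimizing for Slack.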

The Cost of Coordination

Adding nodes to a graph increases the number of potential connections quadratically ($N(N-1)/2$). This is the "Coordination Tax." When hiring for a specific role, such as a React or Node developer, we must calculate the Net Topology Value.

$$NTV = \Delta THS + \Delta P_{success} - \Delta Cost_{annualized}$$

If the coordination tax exceeds the throughput gain, the hire destroys value. This is why "cheap talent" is often the most expensive. A low-capacity engineer increases variance ($C_s^2$) and coordination overhead, driving the system into a high-latency state.
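The quadratic growth of the Coordination Tax is easy to verify. The sketch below only counts potential pairwise connections from $N(N-1)/2$; it does not attempt to price them, and the function name is illustrative.

```python
def coordination_paths(n):
    """Number of potential pairwise connections among n nodes: n(n-1)/2."""
    if n < 0:
        raise ValueError("n must be non-negative")
    return n * (n - 1) // 2


# Growing a team from ten to eleven adds one unit of capacity
# but ten new potential communication paths.
before = coordination_paths(10)
after = coordination_paths(11)

print(before, after, after - before)  # 45 55 10
```

The eleventh engineer from the Factory Fallacy adds capacity linearly but adds ten new paths that someone must maintain.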

(Source: [PAPER-PLATFORM-ECONOMICS])

6. Distributed Physics and Country Hubs

In a distributed topology, latency is quantized by the rotation of the Earth. A "quick question" to a developer in a time zone 12 hours away incurs a 24-hour synchronization penalty.

We mitigate this by leveraging Nearshore delivery hubs that align with US time zones. This reduces the Synchronization Penalty ($S_p$) and allows for real-time collaboration, which is essential for maintaining the "O-Ring" pressure in the middle of the pipeline.
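One rough proxy for the Synchronization Penalty is the number of working hours two nodes share. The sketch below assumes whole-hour UTC offsets and a 09:00 to 17:00 local workday. Both are simplifications, and the function is illustrative rather than a definition of $S_p$.

```python
def overlap_hours(offset_a, offset_b, start=9, end=17):
    """Count the UTC hours in which both people are inside their local workday.

    offset_a, offset_b: whole-hour UTC offsets (e.g. -5, -3, 7).
    start, end: local workday bounds, start inclusive and end exclusive.
    """
    def working(utc_hour, offset):
        local_hour = (utc_hour + offset) % 24
        return start <= local_hour < end

    return sum(
        1 for h in range(24) if working(h, offset_a) and working(h, offset_b)
    )


print(overlap_hours(-5, -3))  # 6: offsets two hours apart
print(overlap_hours(-5, 7))   # 0: offsets twelve hours apart
```

With zero shared hours, every question waits for the other side's next workday. With six shared hours, most questions can be answered the same day.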

The Hub Strategy

We deploy specific topologies into specific regions based on talent density and domain expertise.

- Brazil: A powerhouse for data engineering and Java. We often deploy Java or Python developers in Brazil for heavy backend lifting.

- Mexico: High density of frontend and full-stack talent. Ideal for React and Node deployments that require tight coupling with US product teams.

- Colombia: A balanced hub for enterprise technologies. We utilize .NET and Angular developers in Colombia for legacy modernization and enterprise integration.

- Argentina: Known for strong creative and architectural talent. A prime location for Go and Next.js roles.

We do not treat these locations as interchangeable. We treat them as specialized nodes in the global graph.

7. Security Architecture in the Agentic Era

As we integrate autonomous agents and distributed human nodes, the security perimeter dissolves. We cannot rely on firewalls. We must rely on Identity.

We enforce a Zero Trust architecture. Every human and agent node must be authenticated via a centralized Identity Provider (IdP) using SSO and SCIM provisioning. We utilize security-engineering assessments to ensure that the human nodes understand this paradigm.

We deploy secure virtual desktop infrastructure (VDI) or strictly managed devices (MDM) to ensure that code never leaves the secure enclave. This is not just compliance. It is the definition of the boundary condition for the topology.

(Source: Nearshore Platformed)

8. Conclusion: The Centaur Model

The future of Team Topologies in the Agentic Workflow era beyond 2026 is not about replacing humans. It is about the Centaur Model. We combine the deterministic variance reduction of AI agents with the high-context, high-fidelity cognition of human engineers.

We build systems where AI handles the low-entropy tasks at the edges, while humans protect the high-entropy core. We design these systems using rigorous telemetry, not intuition. We validate the nodes using the Axiom Cortex Architecture engine. We deploy them in time-aligned Partner Network hubs.

This is the only way to escape the "Velocity Trap." We must stop optimizing for the cost of the hour. We must optimize for the probability of the outcome.

The era of the static org chart is over. The era of the probabilistic graph has begun.

(Source: Nearshore Platformed)

9. Strategic Implementation Resources

For the execution of these topologies, we provide the following deep-link resources for technical evaluation and talent acquisition.

Core Research & Doctrine

- TeamStation AI Research

- Human Capacity Spectrum Analysis

- Sequential Effort Incentives

- Nearshore Platform Economics

Technical Evaluation (Axiom Cortex)

- Cloud & DevOps: AWS, Kubernetes, Terraform, and Docker assessments

- Backend: Java, Python, Golang, and Node.js assessments

- Frontend: React/TypeScript, Angular, and Next.js assessments

- Data: data engineering, Snowflake, and Python assessments

Talent Acquisition Paths

- Hire by Role: DevOps engineers, data engineers, QA automation engineers

- Hire by Stack: React, Node, Python, and Java developers

- Hire by Region: Brazil, Mexico, Colombia, Argentina