Human Alignment in the Agentic AI Era

Human alignment for hybrid AI teams: O-Ring math, the wage equation, and cognitive fidelity for reliable engineering systems.

Designing Team Topologies for Hybrid Human–AI Systems

Abstract

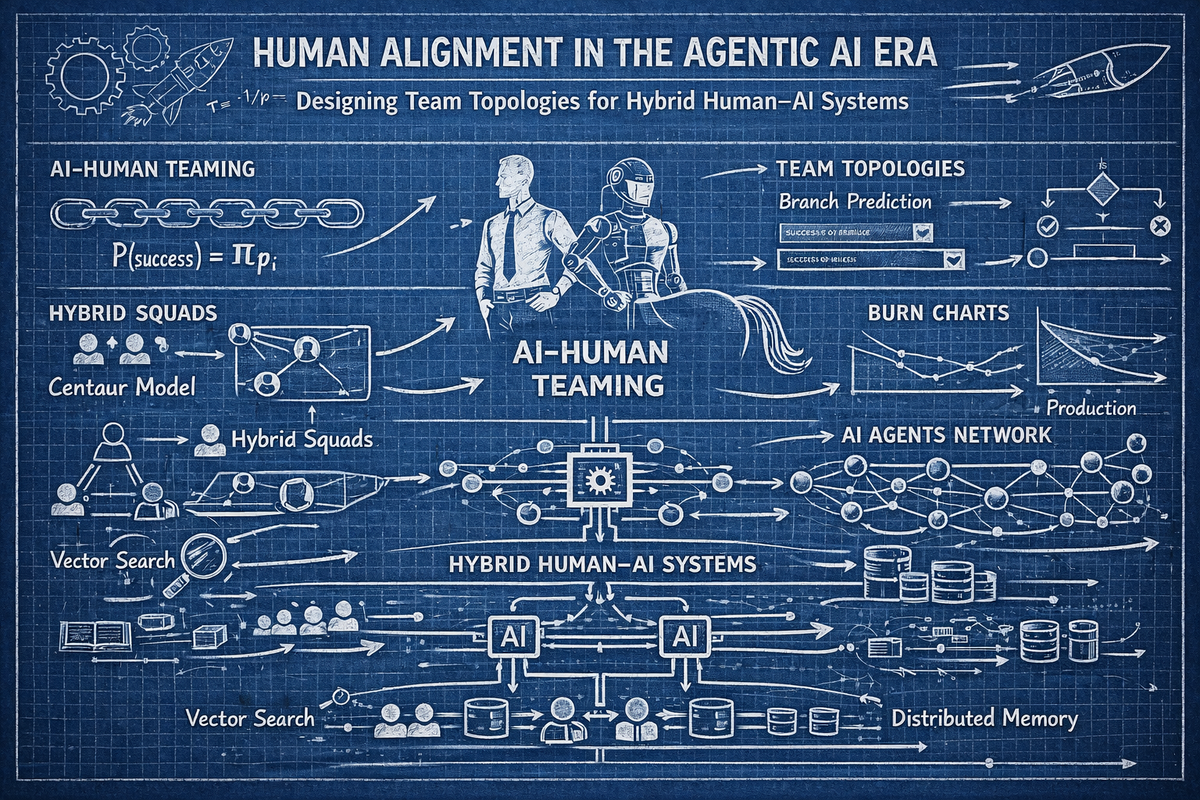

The integration of Agentic Artificial Intelligence into software engineering is not a substitution problem. It is a topological problem. The industry currently views AI adoption through a simplistic lens of labor replacement, assuming that specific roles can be swapped for automated agents without disrupting the surrounding human system. This view is mathematically flawed. Engineering teams function as sequential probability networks. The introduction of deterministic agents into these networks alters the incentive structures, shirking margins, and cognitive loads of the remaining human nodes. This article explores the physics of hybrid Human–AI topologies. We define the "O-Ring Invariant" in the context of agentic workflows. We analyze the "Wage Compression" phenomenon driven by the "Shirking Margin" ($\zeta$). We present a rigorous framework for Human Alignment using the Axiom Cortex™ neuro-psychometric engine to ensure that the humans remaining in the loop possess the specific latent traits required to orchestrate, verify, and govern high-velocity AI systems.

1. The Physics of Sequential Probability

The contemporary discourse regarding Artificial Intelligence and labor markets remains trapped in a philosophical cul-de-sac. Executives ask whether Large Language Models will replace "Software Engineers" or "QA Testers" as if the labor market were a collection of disconnected seats waiting to be swapped out like spark plugs. This view is wrong. Actual teams do not function as bags of isolated tasks. They function as a Sequential Probability Network.

A high-performing engineering team is a chain of dependencies. It is a sequential reactor where value is either added or destroyed at specific gates. The output of the Solutions Architect ($t=0$) becomes the input constraint for the Backend Engineer ($t=1$). The stability of the API ($t=1$) dictates the validity of the Frontend Engineer's work ($t=2$).

In this context, the "job" is irrelevant. The "node" is everything.

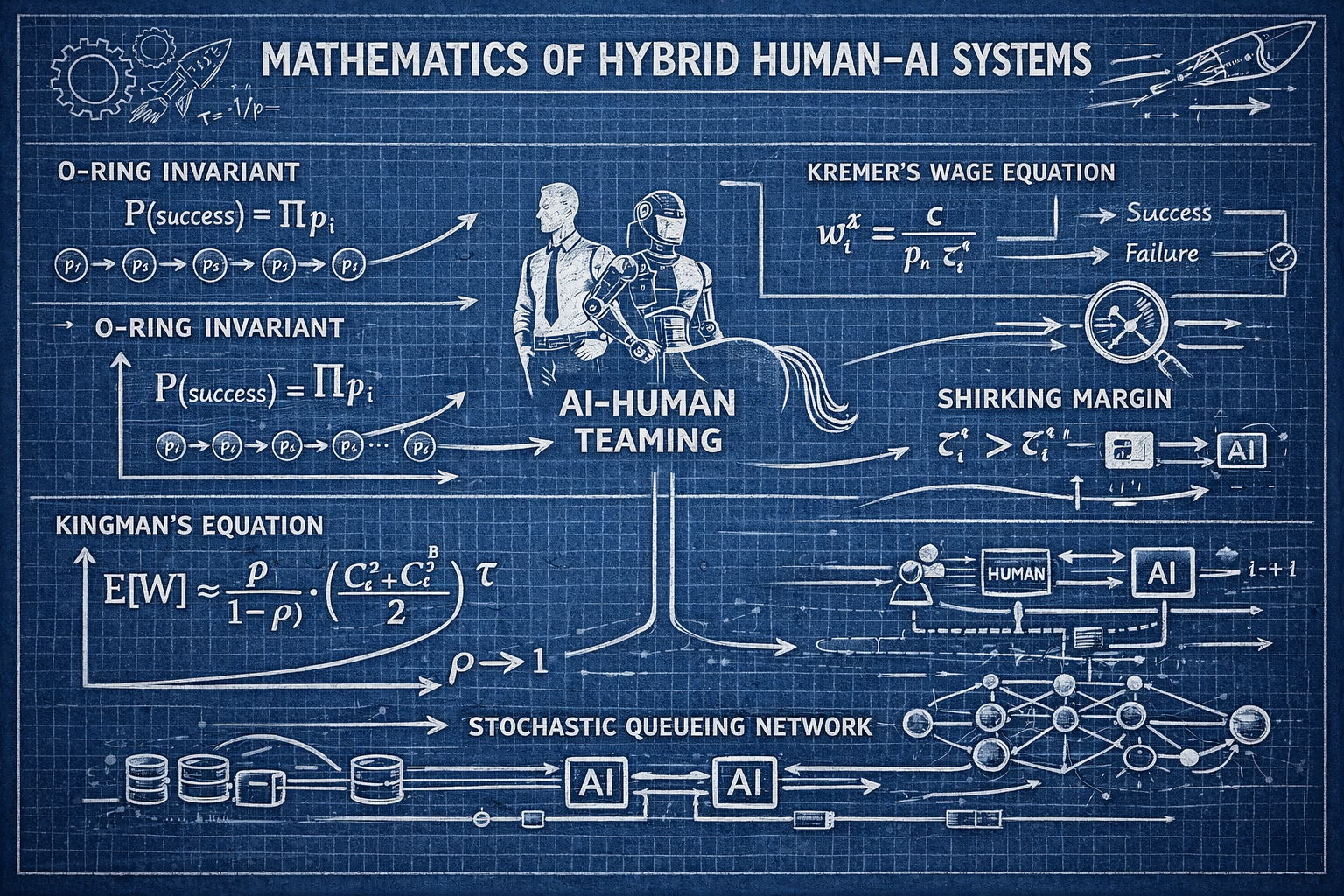

1.1. The O-Ring Invariant and Strict Complementarity

We posit that engineering teams function under the O-Ring Invariant. This concept is adapted from Michael Kremer’s economic theory. Just as the failure of a single component rendered the Challenger disaster inevitable, a failure in a critical upstream engineering node renders downstream brilliance mathematically useless.

In a sequential chain of $n$ workers, the probability of project success ($P$) is the product of the probabilities of success at each node ($p_i$).

$$ P = \prod_{i=1}^{n} p_i $$

If any $p_i$ approaches 0, then $P$ approaches 0. This multiplicative property implies Strict Complementarity. The value of improving one worker's quality depends entirely on the quality of every other worker in the chain. Placing a "10x Engineer" at the end of a chain of junior developers is economic waste. Their multiplier is applied to a base near zero.
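Strict Complementarity can be made concrete with a few lines of Python. This is a minimal sketch: the function names and the node probabilities are illustrative assumptions, not figures from the article. It computes $P$ as the product of the $p_i$ and compares what the same upgrade to the final node is worth in a weak chain and in a strong chain.

```python
from math import prod

def chain_success(node_probs):
    """Probability that every node in a sequential chain succeeds."""
    return prod(node_probs)

def marginal_gain(node_probs, index, new_prob):
    """Change in chain success when one node's probability is raised."""
    upgraded = list(node_probs)
    upgraded[index] = new_prob
    return chain_success(upgraded) - chain_success(node_probs)

# Illustrative (assumed) probabilities: same final node, different upstream quality.
weak_chain = [0.5, 0.5, 0.5, 0.9]
strong_chain = [0.95, 0.95, 0.95, 0.9]

# Upgrade the last node from 0.9 to 0.99 in both chains.
gain_weak = marginal_gain(weak_chain, 3, 0.99)
gain_strong = marginal_gain(strong_chain, 3, 0.99)

print(f"gain in weak chain:   {gain_weak:.5f}")
print(f"gain in strong chain: {gain_strong:.5f}")
```

The identical upgrade is worth several times more in the strong chain, because the improvement is multiplied by the success probability of every other node.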

This mathematical reality explains the "Seniority Trap" discussed in "Why Are Seniors Failing Junior Tasks." A Senior Engineer placed downstream of weak nodes cannot fix the foundational entropy introduced upstream. They can only document it.

1.2. The Introduction of Deterministic Agents

AI agents are not "super workers." They are Deterministic Variance Reducers. When we replace a human node with an AI agent, we are injecting a node where $P(\text{Effort}) \approx 1.0$. This certainty acts as a firewall against the propagation of uncertainty.

However, this introduction is not neutral. It changes the physics of the chain. As detailed in our research on Sequential Effort Incentives, automation changes the Shirking Margin ($\zeta$).

If an AI tool guarantees success at Step 3, the human at Step 2 feels safer. Their fear of failure drops. Their incentive to exert high-cost effort drops. Paradoxically, adding reliability downstream can decrease reliability upstream unless wages are raised to compensate. This is the Paradox of Automation.

2. The Incentive Structure: The Wage Equation

To understand why hybrid teams fail, we must look at the mechanics of incentive compatibility. We model the team as a set of rational agents. Each worker $i$ must choose between two actions: Effort ($e_i = 1$) or Shirking ($e_i = 0$). Effort is costly. It incurs a personal disutility $c > 0$.

The principal, interpreted here as a CTO or the Axiom Cortex Engine system, desires Full Effort. To achieve this, the principal must design a topology where effort is the rational choice.

2.1. The Shirking Variable ($\zeta$)

We define $\zeta_i^x$ as the probability that the project succeeds given that worker $i$ shirks ($e_i=0$), under a specific AI replacement policy $x$. This variable is the measure of "Safety" that kills motivation.

If $\zeta$ is high, the worker believes the project will ship even if they do nothing. The AI downstream will catch the error. The automated test suite will fix the formatting. The generative agent will refactor the code. Consequently, the worker's loss aversion no longer drives them to work.
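One way to see how downstream automation raises $\zeta$ is a toy model. Everything in it is an assumption made for illustration and is not taken from the article: a shirking worker's node still succeeds with probability `shirk_prob`, and a downstream AI repairs a failed node with probability `catch_rate`.

```python
from math import prod

def shirk_success_probability(node_probs, shirker, shirk_prob, catch_rate=0.0):
    """Estimate zeta: the chance the project ships although one worker shirks.

    node_probs -- success probability of each node under full effort
    shirker    -- index of the worker who shirks
    shirk_prob -- assumed chance the shirker's node still succeeds
    catch_rate -- assumed chance a downstream AI repairs a failed node
    """
    others = prod(p for j, p in enumerate(node_probs) if j != shirker)
    node_ok = shirk_prob + (1.0 - shirk_prob) * catch_rate
    return others * node_ok

chain = [0.9, 0.9, 0.9]  # illustrative values only
zeta_without_ai = shirk_success_probability(chain, 1, shirk_prob=0.3)
zeta_with_ai = shirk_success_probability(chain, 1, shirk_prob=0.3, catch_rate=0.8)

print(f"zeta without downstream AI: {zeta_without_ai:.4f}")
print(f"zeta with downstream AI:    {zeta_with_ai:.4f}")
```

Under these assumed numbers, the safety net nearly triples the probability that the project ships despite the shirking, which is the effect the paragraph above describes.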

2.2. The Wage Equation

The Incentive Compatibility Constraint (ICC) for worker $i$ requires that the expected utility of working exceeds the expected utility of shirking. Solving for the minimum wage $w_i$ yields the Wage Equation:

$$ w_i^x = \frac{c}{p_n - \zeta_i^x} $$

The denominator ($p_n - \zeta_i^x$) represents the Incentive Margin. It is the difference in success probability created by the worker's effort.
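The algebra behind this constraint can be written out under a simple set of assumptions that the article does not state explicitly: the worker is risk neutral, is paid $w_i^x$ only if the project succeeds, and $p_n$ denotes the probability of success when worker $i$ exerts effort.

$$ \underbrace{p_n \, w_i^x - c}_{\text{expected utility of effort}} \;\ge\; \underbrace{\zeta_i^x \, w_i^x}_{\text{expected utility of shirking}} \quad\Longrightarrow\quad w_i^x \, (p_n - \zeta_i^x) \ge c \quad\Longrightarrow\quad w_i^x \ge \frac{c}{p_n - \zeta_i^x} $$

The last step requires $p_n > \zeta_i^x$. If effort does not raise the probability of success, no finite wage makes effort the rational choice.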

The Impact of AI: As AI secures the downstream stages of the pipeline (e.g., QA automation), $\zeta_i^x$ rises. The worker knows the robot will catch the error. Consequently, the Incentive Margin ($p_n - \zeta_i^x$) shrinks, and as it approaches zero the required wage $w_i^x$ grows without bound.
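A short numerical sketch shows how quickly the required wage grows as the margin closes. The function name and all numbers are illustrative assumptions. The effort cost is normalized to 1, so wages read as multiples of $c$.

```python
def minimum_wage(effort_cost, p_success, zeta):
    """Smallest success-contingent wage that makes effort rational."""
    margin = p_success - zeta
    if margin <= 0:
        raise ValueError("effort does not raise the success probability")
    return effort_cost / margin

effort_cost = 1.0   # assumed: cost of effort c, normalized to 1
p_success = 0.9     # assumed: success probability under effort

# Required wage for increasing values of zeta (all values illustrative).
wages = {zeta: minimum_wage(effort_cost, p_success, zeta)
         for zeta in (0.2, 0.5, 0.8, 0.89)}

for zeta, wage in wages.items():
    print(f"zeta = {zeta:.2f} -> minimum wage = {wage:.2f} x c")
```

With these numbers the minimum wage climbs from about 1.4 times the effort cost at $\zeta = 0.2$ to roughly 100 times at $\zeta = 0.89$.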

This implies that cheap talent is the most expensive talent in an AI-augmented chain. A worker with a low threshold for effort (or a high cost of effort $c$) will almost certainly shirk when placed upstream of an AI agent. To maintain the O-Ring condition, the human remaining in the loop must possess exceptional Cognitive Fidelity. They must be paid a premium to maintain discipline in the face of safety.

See Nearshore Platform Economics for a deeper analysis of these economics.

3. Cognitive Fidelity and The Turing Trap

If the humans remaining in the loop must be exceptional, how do we identify them? The industry currently relies on "Resumes" and "Keywords." This is a failure of signal processing.

In the era of Generative AI, the "Resume" has lost most of its signal value. A junior developer with GPT-4 can generate a resume that looks identical to a Principal Engineer's CV. They can generate code that looks senior. This is the Turing Trap. The "Artifact" (the code) has decoupled from the "Cognition" (the engineer).

3.1. Axiom Cortex™: The Neuro-Psychometric Engine

We reject Boolean keyword matching. We utilize the Axiom Cortex™, a proprietary Cognitive AI-driven engine. This is not a security tool. It is a scientific instrument for talent evaluation. It measures the isomorphism between the engineer's mental model ($M_e$) and the system state ($S_{sys}$).

As stated in Nearshore Platformed:

"The quest for exceptional technology talent represents a defining challenge of our time... Yet, traditional approaches to talent acquisition often fall short, creating friction, delays, and compromises that hinder progress." (Source: Nearshore Platformed)

The Axiom Cortex uses a Latent Trait Inference Engine (LTIE) to derive variables that are not directly observable. We are looking for the "Dark Matter" of engineering talent.

3.2. The Four Dimensions of Capacity

We map every engineer onto a four-dimensional manifold, distinct from their tech stack proficiency. This is the core of Human Capacity Spectrum Analysis:

- Architectural Instinct (AI): The ability to visualize complex systems before code is written. This is a spatial reasoning trait. Engineers with high Architectural Instinct anticipate failure modes in system design assessments.

- Problem-Solving Agility (PSA): The velocity at which an engineer traverses the solution space when variables change. High PSA correlates with rapid root-cause analysis.

- Learning Orientation (LO): The derivative of skill acquisition. We measure intellectual honesty. We count "Authenticity Incidents," moments where a candidate admits ignorance.

- Collaborative Mindset (CM): The efficiency of information transfer between nodes.

3.3. Metacognitive Conviction Index (MCI)

To detect the Turing Trap, we measure the Metacognitive Conviction Index (MCI). This gauge assesses how well the candidate's confidence is calibrated with their actual knowledge.

- Expert: High Accuracy + High Confidence + Hedge Markers ("It depends...").

- Prompt Engineer: Low Accuracy + High Confidence (Hallucination).

A senior engineer knows what they don't know. A junior engineer assisted by AI often hallucinates certainty. The Axiom Cortex detects this by measuring the "Hedge Incidents" relative to the accuracy score. This is critical for roles such as security engineering, where false confidence is fatal.
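The idea of comparing confidence, accuracy, and hedging can be sketched in a few lines. This is not the Axiom Cortex scoring method, which the article does not specify. It is a hypothetical illustration in which each interview answer carries a correctness flag, a self-reported confidence, and a hedge flag. The `Answer` type, the field names, and the sample data are all assumptions.

```python
from dataclasses import dataclass

@dataclass
class Answer:
    correct: bool      # was the answer right?
    confidence: float  # self-reported confidence in [0, 1]
    hedged: bool       # did the candidate qualify the claim ("it depends")?

def calibration_profile(answers):
    """Summarise accuracy, mean confidence, overconfidence, and hedge rate."""
    n = len(answers)
    if n == 0:
        raise ValueError("need at least one answer")
    accuracy = sum(a.correct for a in answers) / n
    confidence = sum(a.confidence for a in answers) / n
    hedge_rate = sum(a.hedged for a in answers) / n
    return {
        "accuracy": accuracy,
        "confidence": confidence,
        "overconfidence": confidence - accuracy,  # > 0 means confidence outruns knowledge
        "hedge_rate": hedge_rate,
    }

# Hypothetical interview transcripts, invented for illustration.
expert = [Answer(True, 0.9, True), Answer(True, 0.8, False),
          Answer(False, 0.4, True), Answer(True, 0.9, True)]
bluffer = [Answer(False, 0.95, False), Answer(True, 0.9, False),
           Answer(False, 0.9, False), Answer(False, 0.95, False)]

expert_profile = calibration_profile(expert)
bluffer_profile = calibration_profile(bluffer)
print(expert_profile)
print(bluffer_profile)
```

In this toy data the expert's confidence tracks accuracy and comes with hedges, while the second profile shows high confidence, low accuracy, and no hedging, which is the pattern the section labels as hallucinated certainty.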

4. Designing the Hybrid Topology

The managerial directive for the Agentic AI era is not to "hire more engineers." It is to design the graph. We must apply Graph Theory to talent acquisition. We hire nodes, not resumes.

4.1. Replacement Kinetics: Who Gets Replaced?

Teams arranged in sequence do not respond symmetrically when automation enters the line. We analyze the Kinetics of Replacement to determine which roles are structurally exposed.

- The End Position (High Kinetics): The end of the pipeline ($i=n$) is the most replaceable. When the last worker shirks, the project succeeds with probability $p_{n-1}$. Adding AI here (e.g., QA automation, data engineering) yields pure savings. There is no downstream incentive distortion.

- The Middle Position (Low Kinetics): The middle ($i=k$) is structurally protected. Replacing a middle position disrupts the informational link that peer monitoring depends on. If you replace the Integration Architect with an AI, you break the chain of peer pressure. The upstream devs don't know if their code fits. The downstream devs don't know if the specs are valid.

- The Start Position (Medium Kinetics): The start ($i=1$) is augmentable. AI here reduces the variance of the input for the rest of the chain.
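The three positional rules above can be encoded as a small lookup. This is a sketch only: the function name and the example pipeline are hypothetical, and a one-node chain is treated as an end position by assumption.

```python
def replacement_kinetics(position, chain_length):
    """Map a 1-based pipeline position to the article's three categories."""
    if chain_length < 1 or not 1 <= position <= chain_length:
        raise ValueError("position must lie within the chain")
    if position == chain_length:
        return "high"    # end of the pipeline: most replaceable
    if position == 1:
        return "medium"  # start of the pipeline: augmentable
    return "low"         # middle: structurally protected

# Hypothetical five-node pipeline, ordered from first to last.
pipeline = ["architect", "backend", "integration", "frontend", "qa"]
kinetics = {role: replacement_kinetics(i, len(pipeline))
            for i, role in enumerate(pipeline, start=1)}

for role, level in kinetics.items():
    print(f"{role:12s} -> {level} kinetics")
```

The lookup only captures position in the chain. Deciding whether a specific role should actually be automated still depends on the incentive analysis in the surrounding sections.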

4.2. The Centaur Model

We adhere to the Centaur Model (Human + AI). This concept is the new operating system for high-performance engineering. The human's job shifts from "Execution" to "Alignment." The engineer becomes the conductor of a deterministic orchestra.

- Old Skill: Writing syntax. Memorizing libraries.

- New Skill: Agent Orchestration. System Architecture. Verifying AI output.

We are actively vetting for Agentic Orchestration capabilities. We look for engineers who can manage a fleet of agents. The "Architect" is the scarce resource. We need people who can define the boundaries within which the agents operate. This is particularly relevant when hiring DevOps engineering developers.

4.3. Wage Compression and the Cost of Coordination

The optimal application of AI does not lower wages uniformly. It creates Wage Compression. The "bottom" and "middle" wages must rise to maintain discipline in an automated world.

As detailed in Nearshore Platformed:

"The initial 'savings' on hourly rates get eaten alive by hidden operational costs. You need more project managers, more documentation, and more rigorous QA processes just to bridge the communication and time gaps." (Source: Nearshore Platformed)

In a hybrid topology, the human nodes are the "O-Rings." They are the single points of failure. Therefore, they must be elite. The inequality here is that the specialized human becomes significantly more valuable than the generic human.

5. The Platformed Operating Model

To execute this topology, organizations must move from a "Services" model to a "Platform" model. Traditional staffing vendors operate as pipelines. They have gatekeepers. They add friction.

5.1. Nearshore Platform Economics

We are "Platforming" the industry. By building a software layer (TeamStation AI) that sits between the talent and the client, we decouple revenue from headcount. We create Operating Leverage.

- Data Network Effects: Every candidate vetted feeds the Neural Search Engine. The system gets smarter with every interaction.

- Automated Governance: Compliance, payroll, and device security are enforced by code. This removes the "Compliance Roulette."

- Transparency: We expose the raw data. You see the Axiom Cortex scores. You see the background checks.

5.2. The Geography of Alignment

Geography is a necessary but insufficient condition. Time zone alignment lowers the Cost of Coordination, which enters the model through the effort cost $c$. In the Wage Equation $w = c / (p_n - \zeta)$, lowering $c$ lowers the minimum wage $w$ needed to sustain the same effort $e$.

However, access to the same clock does not guarantee access to the same quality. We use the platform to bridge the gap between "Being Awake" and "Being Aligned." We search for engineers in specific hubs who share the mental models of modern software engineering.

High-Fidelity Hubs:

- Brazil: Strong in Java developers.

- Mexico: Deep talent in Node.js and .NET developers.

- Argentina: Exceptional Python and React developer communities.

- Colombia: Rapidly growing Go developer sector.

5.3. The Immutable Audit Trail

In a low-trust environment, Data is the only currency of trust. The TeamStation AI platform builds an Evidence Locker for every engagement.

- Sourcing Evidence: Why was this candidate selected? (Vector Distance).

- Vetting Evidence: How did they perform? (Axiom Cortex scores).

- Operational Evidence: Are they compliant? (Device logs, security audits).
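The article does not say how the Evidence Locker is implemented. One common way to make a log tamper-evident is a hash chain, in which each record's hash covers both its content and the previous record's hash. The sketch below illustrates that general technique only. The function names, record fields, and sample values are all assumptions.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first record

def _digest(kind, payload, prev_hash):
    """SHA-256 over a canonical JSON encoding of the record body."""
    body = {"kind": kind, "payload": payload, "prev_hash": prev_hash}
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()

def append_entry(log, kind, payload):
    """Append a record whose hash covers its content and the previous hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"kind": kind, "payload": payload, "prev_hash": prev_hash,
                "hash": _digest(kind, payload, prev_hash)})
    return log

def verify(log):
    """Return True only if no record has been altered, removed, or reordered."""
    prev_hash = GENESIS
    for entry in log:
        expected = _digest(entry["kind"], entry["payload"], entry["prev_hash"])
        if entry["prev_hash"] != prev_hash or entry["hash"] != expected:
            return False
        prev_hash = entry["hash"]
    return True

# Hypothetical evidence records, one per category in the list above.
locker = []
append_entry(locker, "sourcing", {"vector_distance": 0.12})
append_entry(locker, "vetting", {"score": 87})
append_entry(locker, "operational", {"device_compliant": True})
intact = verify(locker)

locker[1]["payload"]["score"] = 99  # simulate tampering with a stored score
tampered_detected = not verify(locker)
print(intact, tampered_detected)
```

A hash chain detects edits after the fact but does not prevent them. A production system would also need to anchor or replicate the latest hash somewhere the vendor cannot rewrite.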

This immutable audit trail transforms the vendor relationship from "Trust me" to "Verify me." It allows US CTOs to defend their decisions to the board.

6. Conclusion: The Managerial Directive

The integration of Agentic AI is not a license to hire cheaper talent. It is a mandate to hire better talent. The physics of the O-Ring Invariant dictate that as we automate the routine nodes, the pivotality of the remaining human nodes increases.

The map for US CTOs is clear:

- Automate the End: Use AI for QA, Logging, and Data Validation. These roles have high replacement kinetics.

- Protect the Middle: Do not automate the Integration Architect. This node preserves the peer-monitoring incentives.

- Hire for Cognitive Fidelity: Use the Axiom Cortex (see Axiom Cortex Architecture) to measure the isomorphism between the candidate's mind and the system. Reject the "Warm Body."

- Platform the Process: Move away from opaque vendor pipelines. Use TeamStation AI to gain visibility, governance, and data-driven alignment.

We are exiting the era of intuition and entering the era of probability. The future belongs to the Centaurs. The future belongs to the Architects. The future belongs to those who understand the topology of the system.

References

- Nearshore Platformed: AI and Industry Transformation.

- Axiom Cortex Architecture: AxiomCortex™: Scientific R&D Report.

- Human Capacity Spectrum Analysis.

- Sequential Effort Incentives.

- Who Gets Replaced and Why: AI & Nearshore Teams: Who Gets Replaced and Why.

- Nearshore Platform Economics.