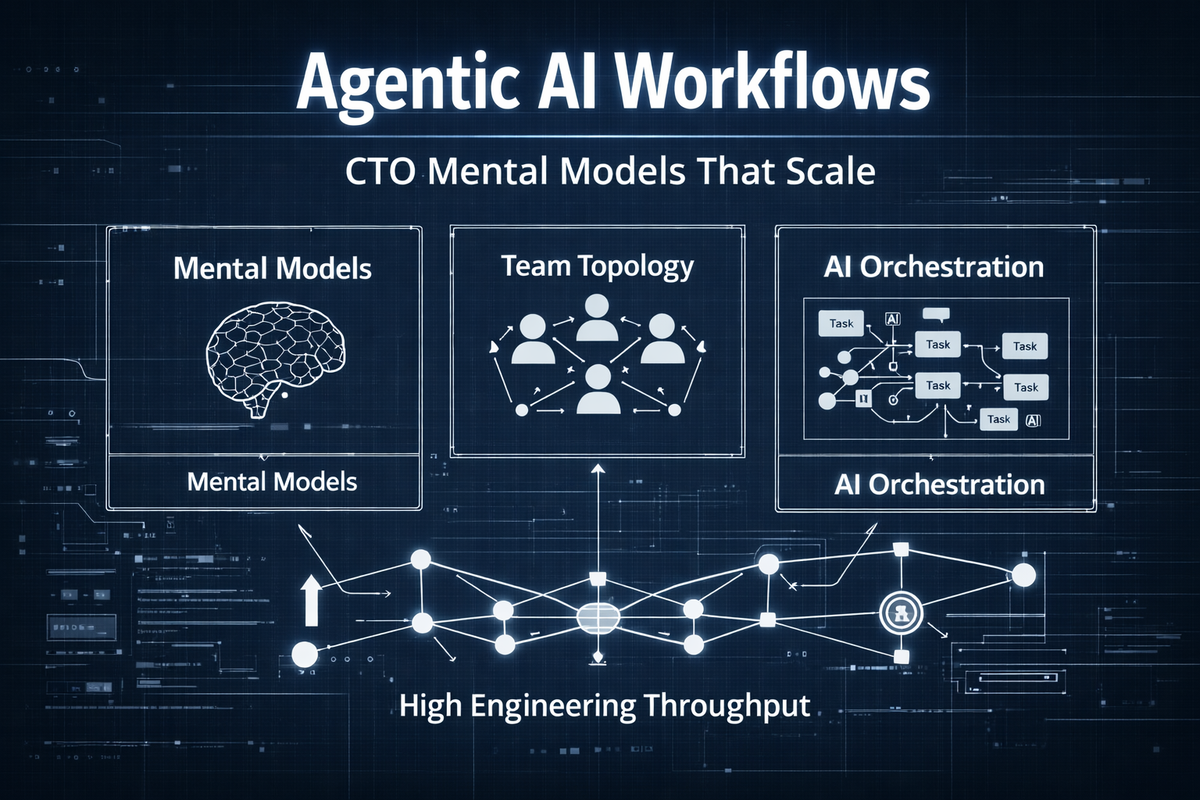

How CTOs can align the right mental shape in their agentic AI dev workflows

CTO guide to agentic AI workflows. Learn how mental models, team topology, and AI orchestration drive real engineering throughput.

The Factory Fallacy and The Latency Horizon

The industry is lying to itself. We treat software engineering like an assembly line. We measure lines of code. We count tickets closed. We track velocity points in a sprint. This is a profound architectural failure. Software engineering is not a deterministic manufacturing process. It is a stochastic queueing network governed by the invisible hand of variance. When a CTO attempts to build agentic AI workflows using industrial-era metrics, the system collapses under its own weight. The failure is not the artificial intelligence. The failure is the human cognitive shape orchestrating that intelligence.

You cannot optimize a probabilistic network using deterministic tools. As stated in Nearshore Platformed, "The quest for exceptional technology talent represents a defining challenge of our time." This challenge is magnified exponentially when we introduce autonomous agents into the delivery pipeline. Agents exert effort with near-zero variance. Human effort varies enormously. When you mix these two states without a rigorous mathematical framework, you generate catastrophic friction. This is the core problem of Nearshore Delivery. We must understand The Synchronicity Physics. We must respect The 4-Hour Rule. We must acknowledge that adding more engineers to a broken topology does not increase output. It increases noise.

Look at the physics of delay. We rely on Kingman's formula to understand the relationship between utilization and latency. As a team approaches 100 percent utilization, expected queueing delay grows without bound. A fully booked engineer cannot absorb the variance of an upstream failure. The queue explodes. The delivery stalls. This is why we see the Ritualistic Agile Decay in so many enterprise environments. Teams go through the motions of standups and sprint planning while actual throughput approaches zero. You can read more about this systemic failure in Why Are Stand-Ups Useless and Why Engineering Velocity Collapses.
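Kingman's approximation for a single-server G/G/1 queue makes the curve concrete:

expected wait ≈ (ρ / (1 - ρ)) · ((c_a² + c_s²) / 2) · τ

Here ρ is utilization, c_a and c_s are the coefficients of variation of arrival gaps and service times, and τ is the mean service time. The sketch below treats a whole team as one such server, which is itself an assumption. The input values are hypothetical, not measured.

```python
def kingman_wait(utilization: float, ca: float, cs: float, mean_service: float) -> float:
    """Kingman's G/G/1 approximation of the mean time a task waits in queue.

    utilization:  rho, the fraction of time the team is busy (must be < 1)
    ca, cs:       coefficients of variation of arrival gaps and of service times
    mean_service: mean time to complete one task (here, days)
    """
    if not 0.0 <= utilization < 1.0:
        # At rho = 1 the approximation diverges: the queue grows without bound.
        raise ValueError("utilization must be in [0, 1)")
    return (utilization / (1.0 - utilization)) * ((ca**2 + cs**2) / 2.0) * mean_service


# Hypothetical team: 1-day mean task, high variance in arrivals and in work (ca = cs = 1).
for rho in (0.50, 0.80, 0.90, 0.95, 0.99):
    print(f"utilization {rho:.2f}: expected wait {kingman_wait(rho, 1.0, 1.0, 1.0):5.1f} days")
```

With these assumed inputs, the expected wait climbs from about 1 day at 50 percent utilization to about 99 days at 99 percent. The (1 - ρ) term in the denominator drives the blow-up.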

The O-Ring Invariant and Sequential Probability

Agentic workflows do not operate in isolation. They are sequential chains of effort. What happens at one step shapes the beliefs and risks at the next. We model this using the O-Ring Invariant. In a sequential chain of workers, the probability of project success is the product of the probabilities of success at each individual node. If the success probability of any single node approaches zero, the success probability of the entire system approaches zero. Strict complementarity dictates that a single weak link destroys the value of every downstream node.
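Below is a minimal sketch of the O-Ring product. It assumes independent nodes with hypothetical success probabilities. It shows why one weak node dominates the outcome and why upgrading only the final node barely moves it.

```python
from math import prod


def chain_success(node_probs: list[float]) -> float:
    """O-Ring success: every node must succeed, so the probabilities multiply."""
    for p in node_probs:
        if not 0.0 <= p <= 1.0:
            raise ValueError(f"probability out of range: {p}")
    return prod(node_probs)


# Hypothetical five-node pipeline: spec, design, build, review, release.
strong = [0.95, 0.95, 0.95, 0.95, 0.95]
weak_middle = [0.95, 0.95, 0.30, 0.95, 0.95]
upgraded_end = [0.95, 0.95, 0.30, 0.95, 0.99]  # better final node, weak middle untouched

for name, chain in [("strong", strong), ("weak middle", weak_middle), ("upgraded end", upgraded_end)]:
    print(f"{name:>12}: {chain_success(chain):.3f}")
```

With these numbers:

- The strong chain succeeds about 77 percent of the time.
- The weak-middle chain succeeds about 24 percent of the time.
- Upgrading the last node only lifts the weak-middle chain to about 25 percent.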

This is why placing a highly paid senior engineer at the end of a broken pipeline is economic waste. Their multiplier is applied to a base of zero. When we introduce AI into this chain, we alter the incentive structure of the human workers. AI acts as a deterministic variance reducer. It always exerts effort. It creates no moral hazard. But where you place that AI dictates the survival of the team. (Source: [PAPER-AI-REPLACEMENT])

If you automate the end of the pipeline, you capture pure savings. The final worker has no downstream dependencies to distort. If you automate the middle of the pipeline, you break the chain of peer monitoring. Upstream workers realize the middle is safe. They reduce their effort. The required wage to motivate them explodes. This is the mathematical reality of Sequential Effort Incentives. You must protect the middle of your pipeline. You must staff the middle with humans who possess the correct cognitive architecture. You can explore the economic ramifications of this in Sequential Effort Incentives and Nearshore Platform Economics.

Human Capacity Spectrum Analysis

How do we identify the right human nodes for the middle of the pipeline? We stop looking at resumes. Resumes are lag indicators. They document past syntax usage. They fail to predict future system design capability in high-velocity environments. We reject the Boolean keyword match. We operate in vector space. We use Human Capacity Spectrum Analysis to model an engineer's potential energy and kinetic availability as a multi-dimensional vector. (Source: [PAPER-HUMAN-CAPACITY])

We decouple skill from capacity. We isolate the latent traits that determine long-term value in an agentic workflow. These traits are absolute.

- Architectural Instinct: The ability to visualize complex systems before code is written. This is spatial reasoning. Engineers with high architectural instinct anticipate failure modes and integration friction intuitively. They understand The Monolith Gravity. They know when to decouple.

- Problem-Solving Agility: The velocity at which an engineer traverses the solution space when variables change. High agility correlates with rapid root cause analysis. They do not freeze when the AI hallucinates. They pivot.

- Learning Orientation: The derivative of skill acquisition. Frameworks have a two-year half-life. Learning orientation is the only durable predictor of relevance. It measures how fast the internal knowledge graph expands.

- Collaborative Mindset: The efficiency of information transfer between nodes. A high capacity individual with low collaborative mindset is a black box sink. They absorb resources but radiate no value to the network. They destroy The Context Membrane.
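Here is a sketch of ranking candidates in vector space rather than by keyword match. It assumes each of the four traits above is already scored on a 0 to 1 scale. The trait order, the scores, the target profile, and the 0.5 floor are all hypothetical.

- Cosine similarity compares profile shape.
- The per-trait floor reflects the complementarity argument above. A single very low trait (the black box sink) disqualifies a candidate regardless of shape.

```python
import math

# Hypothetical trait order: architectural instinct, problem-solving agility,
# learning orientation, collaborative mindset. Scores assumed normalized to [0, 1].


def cosine_similarity(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 0.0 if norm_a == 0 or norm_b == 0 else dot / (norm_a * norm_b)


def rank_candidates(candidates: dict[str, list[float]], target: list[float], floor: float = 0.5) -> list[str]:
    """Drop anyone below the floor on any trait, then rank by similarity to the role's target shape."""
    eligible = [name for name, vec in candidates.items() if min(vec) >= floor]
    return sorted(eligible, key=lambda name: cosine_similarity(candidates[name], target), reverse=True)


target = [0.9, 0.8, 0.8, 0.7]  # hypothetical profile for a pipeline-middle role
candidates = {
    "a": [0.9, 0.8, 0.7, 0.7],
    "b": [0.95, 0.9, 0.9, 0.2],  # strong individually, but a black box sink
    "c": [0.6, 0.7, 0.9, 0.9],
}
print(rank_candidates(candidates, target))
```

Cosine similarity alone ignores magnitude, which is why the sketch pairs it with a floor. Without the floor, a uniformly weak profile with the right shape would rank as well as a strong one.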

When you hire for these vectors, you build resilient topologies. You can evaluate these specific capacities through our dedicated research hubs at Human Capacity Spectrum Analysis and AI Augmented Engineer Performance. If you need to deploy this talent immediately, you must target the exact cognitive profile required for the stack. Whether you need to hire React developers, Python developers, or Golang developers, the vector remains the primary filter.

Axiom Cortex: The Neuro-Psychometric Evaluation Engine

To measure these vectors, we engineered the Axiom Cortex. This is strictly a Neuro-Psychometric Evaluation Engine. It evaluates human talent. It is not a security tool. It is not a firewall. It is not a CI/CD pipeline. It is a scientific instrument designed to eliminate interview hallucination and reduce bias through rigorous mathematical modeling. (Source: [PAPER-AXIOM-CORTEX])

The Axiom Cortex operates on a Self-Governing Phasic Micro-Chunking methodology. We do not evaluate an interview as a single holistic block. That invites the halo effect. We break the interaction into Answer Evaluation Units. Each unit is processed sequentially. Phase 1 handles data ingestion. Phase 2 executes per-question micro-analysis. The engine generates an ideal answer blueprint. It applies forensic NLP and calibration. It scores the axioms based on conceptual fidelity. We measure reasoning. We do not measure vocabulary recitation.
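The phase separation can be sketched as three functions. This illustrates the idea only; it is not the Axiom Cortex implementation. The unit fields, the blueprint format, and the `concept_coverage` scorer are all hypothetical. That scorer is a crude word-overlap stand-in for the forensic NLP described above.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class AnswerEvaluationUnit:
    question_id: str
    question: str
    answer: str


def phase1_ingest(transcript: list[tuple[str, str]]) -> list[AnswerEvaluationUnit]:
    """Phase 1: split a transcript of (question, answer) pairs into independent units."""
    return [AnswerEvaluationUnit(f"q{i}", q, a) for i, (q, a) in enumerate(transcript, start=1)]


def phase2_score(units: list[AnswerEvaluationUnit], blueprints: dict[str, set[str]],
                 scorer: Callable[[str, set[str]], float]) -> dict[str, float]:
    """Phase 2: score each unit against its own blueprint, in isolation.

    The scorer only ever sees one answer and one blueprint, so an impression formed
    on an earlier answer cannot leak into a later score (the halo effect).
    """
    return {u.question_id: scorer(u.answer, blueprints[u.question_id]) for u in units}


def phase3_synthesize(scores: dict[str, float]) -> float:
    """Phase 3: aggregate only after every unit is scored. A plain mean stands in for trait inference."""
    return sum(scores.values()) / len(scores)


def concept_coverage(answer: str, blueprint: set[str]) -> float:
    """Crude stand-in scorer: share of blueprint concepts that appear as words in the answer."""
    words = set(answer.lower().split())
    return len(blueprint & words) / len(blueprint)


transcript = [
    ("How would you shard this table?", "Hash the tenant id and rebalance with consistent hashing"),
    ("How do you roll back a bad deploy?", "Flip the feature flag off then revert"),
]
blueprints = {"q1": {"hash", "tenant", "consistent", "rebalance"}, "q2": {"flag", "revert", "rollback"}}

scores = phase2_score(phase1_ingest(transcript), blueprints, concept_coverage)
print(scores, round(phase3_synthesize(scores), 3))
```

The design choice that matters has two parts. First, `phase2_score` scores each unit in isolation. Second, aggregation waits until `phase3_synthesize`. The scorer itself is meant to be swapped out.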

Only in Phase 3 does the Latent Trait Inference Engine synthesize the data. We use nonparametric methods like isotonic regression to model the relationship between evidence vectors and latent traits. We compute Optimal Transport distances between candidate discourse embeddings and ideal blueprint embeddings. We measure and penalize miscalibration using Information Geometry. This is how we achieve absolute precision in talent evaluation.
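Two of the named techniques can be illustrated in a few lines:

- **Isotonic regression:** fit here with the standard Pool Adjacent Violators algorithm.
- **Optimal transport distance:** computed exactly by brute force over one-to-one matchings. This is valid for equal-size point sets with uniform weights, but only practical for a handful of points.

Any evidence scores or low-dimensional "embeddings" passed in are hypothetical. A production system would use real embeddings and a dedicated OT solver such as network simplex or Sinkhorn iterations.

```python
import math
from itertools import permutations


def isotonic_fit(y: list[float]) -> list[float]:
    """Pool Adjacent Violators: least-squares non-decreasing fit to y.

    Assumes y is already ordered by the evidence variable x, with equal weights.
    """
    blocks: list[list[float]] = []  # each block is [mean, count]
    for value in y:
        blocks.append([float(value), 1])
        # Merge backwards while an earlier block's mean exceeds a later one (a monotonicity violation).
        while len(blocks) > 1 and blocks[-2][0] > blocks[-1][0]:
            mean2, n2 = blocks.pop()
            mean1, n1 = blocks.pop()
            n = n1 + n2
            blocks.append([(mean1 * n1 + mean2 * n2) / n, n])
    fitted: list[float] = []
    for mean, count in blocks:
        fitted.extend([mean] * int(count))
    return fitted


def ot_distance(xs: list[tuple[float, ...]], ys: list[tuple[float, ...]]) -> float:
    """Exact optimal transport cost between two equal-size point clouds with uniform weights
    and Euclidean ground cost. With uniform weights and equal sizes an optimal plan is a
    one-to-one matching, so brute force over matchings is exact (but factorial time)."""
    if len(xs) != len(ys) or not xs:
        raise ValueError("sketch assumes two non-empty point sets of equal size")
    n = len(xs)
    best = min(
        sum(math.dist(xs[i], ys[perm[i]]) for i in range(n))
        for perm in permutations(range(n))
    )
    return best / n


print(isotonic_fit([1, 3, 2, 4]))
print(ot_distance([(0.0, 0.0), (0.0, 1.0)], [(2.0, 0.0), (2.0, 1.0)]))
```

For example, `isotonic_fit([1, 3, 2, 4])` pools the out-of-order pair into `[1.0, 2.5, 2.5, 4.0]`. Two identical point clouds have an OT distance of 0.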

This engine is deployed across every technical domain. We use it in our system design and microservices assessments. We apply it to our Node.js and Java assessments. We measure cognitive fidelity in our data engineering and machine learning assessments. The instrument does not care about the syntax. It cares about the mental shape. You can review the foundational architecture at Axiom Cortex Engine and Axiom Cortex Architecture.

The Micro-Team Topology and Conway's Law

Once you have measured the talent, you must arrange the nodes. Conway's Law dictates that system design mirrors communication structures. If you want a decoupled agentic workflow, you must build a decoupled organization. We enforce The Micro-Team Topology. Small autonomous units. High cohesion. Low coupling. We mandate The Trunk-Based Imperative. Code must be merged to the main branch continuously. Long-lived feature branches destroy flow. They create integration hell. You can see the exact failure modes of poor topology in Why Is Integration Hell and Why Adding Engineers Reduces Productivity.

Agentic workflows require strict interface definitions. The interface is a treaty. It must be ratified by both the human and the AI before work begins. If the treaty is broken, the build fails. We use The Feature Flag Topology to decouple deployment from release. We deploy constantly. We release when the business dictates. This reduces the blast radius of any single failure. It enables The Chaos Monkey Mandate. We inject failure into the system intentionally to verify resilience. We do not wait for production to break. We break it ourselves in a controlled state.
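One way to make the treaty enforceable is a contract check that runs in CI. It fails the build when an implementation, whether human- or agent-written, drifts from the agreed interface. The sketch assumes the treaty is expressed as a Python `typing.Protocol`. `InvoiceRepository` and the two implementations are hypothetical, and the check compares parameter names only, not types.

```python
import inspect
from typing import Protocol


class InvoiceRepository(Protocol):
    """Hypothetical treaty agreed by the human and the agent before work starts."""

    def get(self, invoice_id: str) -> dict: ...
    def save(self, invoice: dict) -> None: ...


def treaty_violations(protocol: type, impl: type) -> list[str]:
    """List protocol methods that impl is missing or implements with a different parameter list."""
    problems = []
    for name, member in vars(protocol).items():
        if name.startswith("_") or not callable(member):
            continue
        candidate = getattr(impl, name, None)
        if candidate is None:
            problems.append(f"missing method: {name}")
            continue
        expected = list(inspect.signature(member).parameters)
        actual = list(inspect.signature(candidate).parameters)
        if expected != actual:
            problems.append(f"{name}: expected {expected}, got {actual}")
    return problems


class HumanWrittenRepo:
    def get(self, invoice_id: str) -> dict:
        return {"id": invoice_id}

    def save(self, invoice: dict) -> None:
        pass


class AgentWrittenRepo:
    def get(self, invoice_id: str) -> dict:
        return {"id": invoice_id}

    def save(self, invoice: dict, overwrite: bool) -> None:  # drifted from the treaty
        pass


print(treaty_violations(InvoiceRepository, HumanWrittenRepo))
print(treaty_violations(InvoiceRepository, AgentWrittenRepo))
```

A CI step could call `treaty_violations` for each implementation and exit non-zero when the list is not empty.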

This topology requires explicit interaction modes. Collaboration. Facilitation. X-as-a-Service. You cannot have every team talking to every other team. That creates a distributed monolith. Communication overhead scales quadratically. You must restrict the communication pathways to enforce architectural boundaries. You can study these mathematical models in our Team Engineering Topologies.
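The quadratic growth is the pairwise-channel count n(n - 1)/2. The sketch below compares a full mesh against an explicit interaction map. The team names and the map are hypothetical: stream teams consume a platform X-as-a-Service, and two of them are facilitated by an enabling team.

```python
def full_mesh_paths(teams: int) -> int:
    """Pairwise channels when every team may talk to every other team: n(n - 1) / 2."""
    return teams * (teams - 1) // 2


def restricted_paths(interactions: dict[str, set[str]]) -> int:
    """Channels allowed by an explicit interaction map, counted once per undirected pair."""
    return len({frozenset((a, b)) for a, peers in interactions.items() for b in peers if a != b})


# Hypothetical topology: stream teams consume the platform X-as-a-Service,
# and two of them are temporarily facilitated by a security enabling team.
interactions = {
    "checkout": {"platform"},
    "search": {"platform"},
    "catalog": {"platform"},
    "payments": {"platform", "security-enabling"},
    "identity": {"platform", "security-enabling"},
    "mobile": {"platform"},
    "platform": set(),
    "security-enabling": set(),
}
print(full_mesh_paths(len(interactions)), restricted_paths(interactions))
```

Eight teams in a full mesh have 28 channels. The restricted map keeps 8. At 16 teams, the full mesh reaches 120.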

Security Pillar: The Context Membrane

Agentic workflows introduce massive surface area for security vulnerabilities. AI agents traverse environments, execute code, and access databases. You cannot secure this with legacy perimeter defenses. You must build The Context Membrane. This is a strict architectural security model based on identity and zero trust.

Every human node and every AI agent must be authenticated and authorized continuously. We mandate Single Sign-On (SSO) across the entire delivery pipeline. We enforce strict Identity Provider (IdP) integration. Provisioning and de-provisioning must be automated via System for Cross-domain Identity Management (SCIM). When a human node leaves the network, the IdP propagates the change and their access is revoked across every connected system automatically. There is no manual offboarding. Manual offboarding is a critical vulnerability.
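Automated de-provisioning usually comes down to one SCIM 2.0 call. The sketch builds, but does not send, the standard PatchOp request from RFC 7644 that sets a user's `active` attribute to false. The base URL, user id, and token are placeholders. Which operations a given service accepts (PATCH versus PUT, or a hard DELETE) depends on that service.

```python
import json
import urllib.request

PATCH_OP_SCHEMA = "urn:ietf:params:scim:api:messages:2.0:PatchOp"


def build_deactivate_request(base_url: str, user_id: str, token: str) -> urllib.request.Request:
    """Build a SCIM 2.0 PATCH that sets the user's `active` attribute to false."""
    body = {
        "schemas": [PATCH_OP_SCHEMA],
        "Operations": [{"op": "replace", "path": "active", "value": False}],
    }
    return urllib.request.Request(
        f"{base_url.rstrip('/')}/Users/{user_id}",
        data=json.dumps(body).encode("utf-8"),
        method="PATCH",
        headers={"Content-Type": "application/scim+json", "Authorization": f"Bearer {token}"},
    )


# Placeholder endpoint and credentials; sending would be urllib.request.urlopen(req).
req = build_deactivate_request("https://scim.example.com/scim/v2/", "2819c223", "REDACTED")
print(req.get_method(), req.full_url)
```

Deactivation stops new sign-ins. Already-issued sessions and tokens are cut off only if the target service also revokes them, which is worth verifying for each system.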

Code must be written and executed in secure environments. We deploy Virtual Desktop Infrastructure (VDI) to ensure that source code never rests on a local physical machine. The endpoint is merely a viewing glass. We enforce Mobile Device Management (MDM) on all hardware to guarantee compliance, encryption, and remote wipe capabilities. You cannot build secure agentic workflows if your developers are pulling repositories to unmanaged laptops in coffee shops. You can read the brutal reality of this in Secure Code on a Laptop and Why Governance Doesn't Prevent Risk. We evaluate the capacity to design these secure systems through our security engineering assessment. You can also hire security engineering developers directly.
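The membrane can be expressed as a default-deny policy evaluated on every request. This is a sketch under stated assumptions: the five signals below are hypothetical fields. In practice they would come from the IdP, the SCIM-managed directory, and the MDM and VDI brokers.

```python
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class AccessRequest:
    identity_verified: bool  # fresh SSO assertion from the IdP
    account_active: bool     # still active in the SCIM-managed directory
    device_managed: bool     # enrolled in MDM
    disk_encrypted: bool     # MDM reports full-disk encryption
    via_vdi: bool            # session brokered through VDI, not a local clone


def evaluate(req: AccessRequest) -> tuple[bool, list[str]]:
    """Default deny: grant only if every signal passes; report which ones failed."""
    failures = [name for name, ok in asdict(req).items() if not ok]
    return (not failures, failures)


print(evaluate(AccessRequest(True, True, True, True, True)))
print(evaluate(AccessRequest(True, True, False, True, False)))  # unmanaged laptop, local clone
```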

Platform Economics and The Rework Rate Coefficient

The financial model of traditional IT staffing is fundamentally broken. It is a services business scaling linearly with headcount. It optimizes for input cost. It ignores output value. Billing for hours rewards slowness. Billing for velocity rewards innovation. We operate on Platform Economics. We decouple revenue from headcount. We use deterministic AI governance to manage the network. (Source: [PAPER-PLATFORM-ECONOMICS])

When you hire cheap talent, you maximize the Cost of Delay. A warm body is a net negative producer. They introduce dark technical debt. They consume the time of your senior engineers in review cycles. We measure this via The Rework Rate Coefficient. If a junior engineer produces two units of value but consumes four units of a senior engineer's time, the total output of the system drops. Cheap talent is mathematically the most expensive talent you can buy. We break down this economic reality in Why Cheap Talent Is Expensive and When Does a New Hire Become Profitable.
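Here is the paragraph's arithmetic as a sketch. It assumes one unit of senior time would otherwise have produced one unit of value. The `rework_rate_coefficient` definition (senior value consumed per unit of value produced) is a hypothetical formalization, not a published one.

```python
def net_contribution(value_produced: float, senior_time_consumed: float,
                     senior_value_per_unit: float = 1.0) -> float:
    """Net change in system output: value produced minus the senior output displaced by review and rework."""
    return value_produced - senior_time_consumed * senior_value_per_unit


def rework_rate_coefficient(value_produced: float, senior_time_consumed: float,
                            senior_value_per_unit: float = 1.0) -> float:
    """Hypothetical coefficient: senior value consumed per unit of value produced. Above 1.0 is net negative."""
    if value_produced <= 0:
        raise ValueError("value_produced must be positive")
    return senior_time_consumed * senior_value_per_unit / value_produced


# The paragraph's example: two units produced, four units of senior time consumed.
print(net_contribution(2, 4), rework_rate_coefficient(2, 4))
```

With the paragraph's numbers, the junior engineer nets -2 units and has a coefficient of 2.0. Any coefficient above 1.0 means the hire lowers total output.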

The true cost of engineering includes procurement, coordination, architecture, tooling, rework, and attrition. You must model the system economics. You must calculate the net topology value of a hire. If the coordination tax exceeds the flow benefit, the hire destroys value. This is why we rely on the Axiom Cortex to predict integration fitness before the hire is made. We simulate the topology shift. We do not guess. We measure.

The Legacy Migration Event Horizon

Organizations are trapped by their legacy systems. The Monolith Gravity pulls all velocity down to zero. Migrating these systems using traditional human effort is statistically impossible. The codebase mutates faster than the migration team can translate it. This is The Legacy Migration Event Horizon. You can read about this stall in Why Is The Migration Stalled and Why Are We Fixing The Same Bug Again.

Agentic workflows offer an escape velocity. But only if the human orchestrators possess the Architectural Instinct to guide the agents. The AI can translate the syntax. The AI cannot redesign the domain boundaries. The human must define the bounded contexts. The human must establish the anti-corruption layers. If you unleash AI on a legacy monolith without a strict architectural mandate, you simply generate a distributed big ball of mud at machine speed. Fixing AI-generated garbage costs more than writing it correctly the first time. We document this phenomenon in When Fixing AI Code Costs More.
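An anti-corruption layer is the translation boundary that keeps legacy concepts out of the new bounded context. Below is a minimal sketch. It assumes a hypothetical legacy row with cryptic column names, balances stored as cents in strings, and single-letter status codes. Unknown codes fail loudly instead of leaking into the new model.

```python
from dataclasses import dataclass
from decimal import Decimal

# Hypothetical legacy status codes, translated into the new context's vocabulary.
LEGACY_STATUS = {"A": "active", "S": "suspended", "C": "closed"}


@dataclass(frozen=True)
class Account:
    """Model owned by the new bounded context; it knows nothing about the legacy schema."""
    account_id: str
    balance: Decimal
    status: str


def translate_legacy_account(row: dict[str, str]) -> Account:
    """Anti-corruption layer: the only code allowed to know legacy column names and encodings."""
    code = row["ACCT_STAT_CD"].strip()
    if code not in LEGACY_STATUS:
        raise ValueError(f"unknown legacy status code: {code!r}")
    return Account(
        account_id=row["ACCT_NO"].strip(),
        balance=Decimal(row["BAL_CENTS"].strip()) / 100,  # legacy stores cents as text
        status=LEGACY_STATUS[code],
    )


legacy_row = {"ACCT_NO": "000123 ", "BAL_CENTS": "150075", "ACCT_STAT_CD": "A "}
print(translate_legacy_account(legacy_row))
```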

As stated in Nearshore Platformed, "The solution lies not in incremental fixes, but fundamental redesign." You must re-architect the organization to re-architect the software. You must deploy the Axiom Cortex to find the exact minds capable of this translation. You must evaluate their capacity with our event sourcing and REST API design assessments. You must confirm, through our Terraform and Kubernetes assessments, that they can build the new infrastructure. You cannot fake this knowledge. The engine will detect the metacognitive gap.

Executing the Nearshore Delivery Mandate

The talent required to orchestrate these agentic workflows exists. It is distributed across the Latin American ecosystem. But you cannot access it using legacy vendor models. The vendor black box obscures the signal. You must use the TeamStation AI platform to penetrate the market with deterministic precision. We map the entire continent using vector embeddings. We track the cognitive fidelity of every node.

If your topology requires specific execution capabilities, we route the demand to the optimal geographic hub. We bypass the local scarcity. We bypass the offshore time zone tax. We enforce The 4-Hour Rule for synchronous overlap. We build the Context Membrane across borders.
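The 4-Hour Rule reduces to an interval-overlap calculation in UTC. The sketch assumes 9:00 to 17:00 local working windows and fixed winter UTC offsets. It ignores daylight saving time, so a real check should use `zoneinfo` for the actual dates. The city choices are illustrative.

```python
def to_utc(window: tuple[float, float, float]) -> tuple[float, float]:
    """Convert a (start_hour, end_hour, utc_offset) local window to UTC hours."""
    start, end, offset = window
    return start - offset, end - offset


def overlap_hours(a: tuple[float, float, float], b: tuple[float, float, float]) -> float:
    """Hours both windows share. Assumes each window is 12 hours or shorter."""
    a0, a1 = to_utc(a)
    b0, b1 = to_utc(b)
    total = 0.0
    for shift in (-24, 0, 24):  # also catch overlaps that wrap past midnight UTC
        total += max(0.0, min(a1, b1 + shift) - max(a0, b0 + shift))
    return total


def meets_four_hour_rule(a: tuple[float, float, float], b: tuple[float, float, float],
                         required: float = 4.0) -> bool:
    return overlap_hours(a, b) >= required


# Illustrative 9:00 to 17:00 windows with fixed winter offsets (DST ignored).
NEW_YORK = (9, 17, -5)
SAN_FRANCISCO = (9, 17, -8)
BUENOS_AIRES = (9, 17, -3)
MANILA = (9, 17, 8)

print(overlap_hours(NEW_YORK, BUENOS_AIRES), meets_four_hour_rule(NEW_YORK, BUENOS_AIRES))
print(overlap_hours(SAN_FRANCISCO, BUENOS_AIRES), meets_four_hour_rule(SAN_FRANCISCO, BUENOS_AIRES))
print(overlap_hours(NEW_YORK, MANILA))
```

Under these assumptions:

- New York and Buenos Aires share 6 hours.
- San Francisco and Buenos Aires share only 3.
- New York and Manila share none.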

When you need to scale your frontend architecture, you do not post a job board ad. You query the platform for React developers in Argentina or Next.js developers in Brazil. When your backend requires high concurrency and low latency, you target Go developers in Colombia or Node developers in Mexico. When you are building complex data pipelines, you align with Python developers in Chile or Python developers in Peru. The geographic location is merely a coordinate in the vector space. The cognitive shape is the primary key.

We evaluate the specific framework capacities using the Axiom Cortex. We run the React and TypeScript assessment. We run the Python assessment. We run the Golang assessment. We verify the signal. We eliminate the noise. We deliver the node to your topology ready to integrate.

The Definition of Ready and The Incident Response Symmetry

Agentic workflows demand absolute rigor in task definition. The Definition of Ready must be mathematically precise. If a human feeds ambiguous requirements to an AI agent, the agent will hallucinate a solution. The variance explodes. We enforce strict contract definitions before any code is generated. The human node must possess the Problem-Solving Agility to deconstruct the business requirement into deterministic logical constraints.
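A Definition of Ready can be enforced as a gate that refuses to dispatch a task to an agent until the task is unambiguous. The sketch assumes hypothetical `TaskSpec` fields and a small, hypothetical list of vague words. A real gate would encode the team's own rules.

```python
import re
from dataclasses import dataclass, field

# Hypothetical list of words that signal an untestable requirement.
VAGUE_WORDS = ("fast", "robust", "intuitive", "appropriate", "etc", "tbd")


@dataclass
class TaskSpec:
    title: str
    interface: str = ""  # the contract the agent's output must satisfy
    acceptance_criteria: list[str] = field(default_factory=list)


def readiness_gaps(spec: TaskSpec) -> list[str]:
    """Return every reason the task is not ready; an empty list means it may be dispatched."""
    gaps = []
    if not spec.interface.strip():
        gaps.append("no interface contract")
    if not spec.acceptance_criteria:
        gaps.append("no acceptance criteria")
    for criterion in spec.acceptance_criteria:
        words = set(re.findall(r"[a-z]+", criterion.lower()))
        hits = [w for w in VAGUE_WORDS if w in words]
        if hits:
            gaps.append(f"vague criterion {criterion!r}: {', '.join(hits)}")
    return gaps


ready = TaskSpec(
    "Add invoice export",
    interface="export_invoices(month: str) -> bytes  # UTF-8 CSV",
    acceptance_criteria=["returns a header row plus one row per invoice",
                         "p95 latency under 200 ms for 10000 invoices"],
)
vague = TaskSpec("Make export fast", acceptance_criteria=["export should be fast and robust"])
print(readiness_gaps(ready))
print(readiness_gaps(vague))
```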

When the system fails, and it will fail, we rely on Incident Response Symmetry. The team that builds the workflow must operate the workflow. We kill Mean Time To Innocence. There is no separate operations team to blame. We democratize observability. We share the telemetry. We optimize for Mean Time To Recovery. We enforce the revertability principle. Every change must be reversible. We use feature flags to decouple deployment from release. We evaluate this operational maturity through our Prometheus, Grafana, and CI/CD assessments.
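Decoupling deployment from release, combined with instant revertability, can be sketched as a percentage rollout with a kill switch. The in-memory `FlagStore` is a hypothetical stand-in for a feature-flag service. Hashing the flag name and user id gives each user a stable bucket, so the same user sees the same variant across requests.

```python
import hashlib


class FlagStore:
    """Hypothetical in-memory stand-in for a feature-flag service."""

    def __init__(self) -> None:
        self._rollout: dict[str, int] = {}

    def set_rollout(self, flag: str, percent: int) -> None:
        if not 0 <= percent <= 100:
            raise ValueError("percent must be between 0 and 100")
        self._rollout[flag] = percent

    def kill(self, flag: str) -> None:
        """Revert the release immediately; the deployed code stays in place."""
        self._rollout[flag] = 0

    def is_enabled(self, flag: str, user_id: str) -> bool:
        percent = self._rollout.get(flag, 0)  # unknown flags are off by default
        digest = hashlib.sha256(f"{flag}:{user_id}".encode("utf-8")).digest()
        bucket = int.from_bytes(digest[:4], "big") % 100  # stable bucket in 0..99
        return bucket < percent


flags = FlagStore()
users = [f"user-{i}" for i in range(1000)]
flags.set_rollout("new-checkout", 10)  # deployed to everyone, released to about 10 percent
print(sum(flags.is_enabled("new-checkout", u) for u in users))
flags.kill("new-checkout")  # incident: release reverted without a redeploy
print(sum(flags.is_enabled("new-checkout", u) for u in users))
```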

You cannot build resilient systems with fragile minds. You must measure the metacognitive conviction index. You must know if your engineers hallucinate certainty. You must know if they can whiteboard the architecture under pressure. We explore this necessity in Can They Whiteboard Architecture and How Fast Can They Find Root Cause.
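Hallucinated certainty is a calibration problem, and standard calibration metrics give a starting point. The sketch computes two metrics from self-reported confidence and graded correctness:

- the Brier score;
- a simple overconfidence gap (mean stated confidence minus accuracy).

This is an illustrative proxy, not the definition of the metacognitive conviction index, and the data is made up.

```python
def _check(confidences: list[float], outcomes: list[int]) -> None:
    if not confidences or len(confidences) != len(outcomes):
        raise ValueError("need equal-length, non-empty inputs")


def brier_score(confidences: list[float], outcomes: list[int]) -> float:
    """Mean squared gap between stated confidence (0..1) and correctness (0 or 1). Lower is better."""
    _check(confidences, outcomes)
    return sum((c - o) ** 2 for c, o in zip(confidences, outcomes)) / len(confidences)


def overconfidence_gap(confidences: list[float], outcomes: list[int]) -> float:
    """Mean stated confidence minus accuracy. Positive values mean certainty exceeds correctness."""
    _check(confidences, outcomes)
    return sum(confidences) / len(confidences) - sum(outcomes) / len(outcomes)


# Made-up data: self-rated confidence per answer, and whether the answer was graded correct.
calibrated = ([0.9, 0.8, 0.6, 0.7], [1, 1, 0, 1])
overconfident = ([1.0, 1.0, 0.95, 1.0], [1, 0, 0, 1])
for name, (conf, correct) in [("calibrated", calibrated), ("overconfident", overconfident)]:
    print(f"{name}: brier={brier_score(conf, correct):.3f} gap={overconfidence_gap(conf, correct):+.3f}")
```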

The Executive Conclusion

The era of intuition is over. The era of probability has arrived. CTOs can no longer afford to guess at the cognitive shape of their engineering teams. Agentic AI workflows amplify every structural weakness in your organization. If your topology is broken, the AI will accelerate the collapse. If your incentives are misaligned, the AI will maximize the cost of delay.

You must adopt a quantitative mindset. You must treat human talent as nodes in a probabilistic network. You must measure their capacity using the Axiom Cortex. You must enforce the Context Membrane using strict security architecture. You must align your operations with Platform Economics. You must leverage the Nearshore Delivery model to access the required vectors without the friction of legacy outsourcing.

This is the engineering physics of the modern enterprise. We do not guess. We measure. We align. We execute. Explore the foundational research at TeamStation AI Research and the main platform capabilities at TeamStation AI. The tools to build the future are available. The mandate is yours to execute.